Dynamics 365 F&O / DevOps and GitHub - ALM New Generation

Hello the Community!

After a long break, I'm excited to dive back in with a new, in-depth article. My extensive work with MCP, AI, Finance & Operations (F&O), and Business Process Automation (BPA), along with years spent on Lifecycle Services (LCS), has prepared me for the major shift we are witnessing now. We are entering a new phase: the Unified ALM experience for Dynamics 365 Finance & Operations. AI can help a lot here too, since Copilot is a good fit for helping you build your YAML, as we will see later 😊

With F&O now converging into the Power Platform Admin Center (PPAC), and the growing influence of GitHub and DevOps automation, it is time to reconsider our approaches to managing the application lifecycle. This transition covers everything from initial development to final deployment, introducing new tools and processes that streamline and modernize our workflows.

This article is the natural continuation of my previous post on the Unified Developer Experience (UDE), where I introduced the shift from LCS to PPAC and the new local development model. If you haven’t read it yet, I highly recommend checking it out here: https://www.powerazure365.com/dynamics-365-finops-unified-developer-experience.

By the way, I have kept that article updated since 2023, including Unified ALM. Here, though, we will cover everything from the start, as if you had just signed a new F&O project and were starting directly in the PPAC era.

Now, let’s go deeper. In this guide, I’ll walk you through how to set up a modern DevOps pipeline using GitHub or Azure DevOps, how to manage environments in PPAC, and how to automate deployments using YAML and PAC CLI. We'll also explore the latest enhancements from Microsoft and community tools that make our lives easier.

I will focus first on Azure DevOps, and at the end I will finish with a GitHub-only mode for F&O! This guide is designed for Dynamics 365 F&O developers and consultants transitioning from traditional LCS and TFVC-based workflows to modern Git and DevOps practices.

Before jumping into my article, I highly recommend reading two very good blog articles on this subject (and I know there are more). Mine is “just” another way to give back to the community and to help you with this topic in 2026:

Throughout this article I will talk about YAML files, code, samples, etc. All of them are ready to download there, and I will try to push more of them in the coming weeks!

Table of Contents:

Git Integration, Branching Strategy & Pull Requests for Dynamics 365 F&O

Automating Environment Management with YAML, PAC CLI & PowerShell

⚠️ Current Limitations & Expected Microsoft Enhancements (2026)

🛠️ Community Power: d365bap.tools for Advanced ALM Automation

Comprehensive Automation with MCP and GitHub Copilot Agent: The Future of X++ Development

☁️ Deploying the X++ MCP Server to Azure App Service: Enterprise-Grade Hosting

Ready to ditch LCS and embrace modern ALM? Discover how to supercharge your Dynamics 365 F&O development with GitHub, Azure DevOps, and YAML automation. From PPAC migration to advanced Git workflows and AI-powered tools like GitHub Copilot MCP—this is your blueprint for next-gen X++ DevOps.

Getting Started: Setup & Prerequisites

Before diving into pipelines and automation, let’s make sure your foundation is solid. Here are the key steps to get started with Unified ALM for Dynamics 365 F&O.

1. Create Your Azure DevOps Organization

If you’re starting from scratch, I’ve already covered the basics of creating a new Azure DevOps organization in this article: https://www.powerazure365.com/blog-1/automation-alm-power-platform#devops . It includes step-by-step guidance on setting up your first project and configuring repositories.

2. Connect Azure DevOps to Your F&O Project

As a quick reminder, Azure DevOps now disables classic pipelines in the UI by default, so you use the modern YAML pipeline experience for both builds and releases. Environment management is integrated into this modern setup, which is why the traditional "Releases" option is no longer available.

Once your organization is ready:

Create a new project in Azure DevOps.

Choose Git as your version control system (TFVC is not formally deprecated for Unified ALM, but in 2026 I strongly recommend using only Git going forward).

Clone your repository locally using Visual Studio 2022 (and soon, officially, Visual Studio 2026 😊).

Install the Dynamics 365 F&O Visual Studio extension from the Visual Studio Marketplace.

Connect your Visual Studio to your Azure DevOps project using your credentials or a Personal Access Token (PAT).

3. Install Required DevOps Extensions

To enable build and release pipelines for F&O, install the following extensions in your Azure DevOps organization:

Dynamics 365 Finance and Operations Tools (especially if you are still using LCS; I will cover it briefly just in case, but I will focus mainly on the new PPAC era)

Power Platform Build Tools (for the new PPAC era of F&O Unified Experience environments, and also to help you deploy Dataverse solutions for Power Platform components in general)

Azure Key Vault (optional, for managing secrets securely)

There are other great extensions in the Marketplace worth checking out:

SonarCloud (for code quality and static analysis)

Mend Bolt, formerly WhiteSource Bolt (for open source security and compliance scanning)

Slack or Teams Integration (for automated notifications and team collaboration)

ServiceNow or JIRA DevOps (for integrating ITSM workflows with your pipelines)

Code Search (to quickly search and explore code across your projects directly from the Azure DevOps search bar)

These extensions can further enhance your build, release, and collaboration workflows, providing valuable automation, quality checks, advanced searchability, and integration with external tools. Review the Azure DevOps Marketplace regularly for new and trending extensions that fit your team's specific needs.

We will come back to the GitHub-only mode at the end of the article.

Additionally, you might consider setting up a dedicated GitHub repository for the technical aspects of your project, such as source code and CI/CD workflows, while leveraging Azure DevOps for project management, work item tracking, and release pipelines. This approach allows you to benefit from GitHub’s developer-centric features and community integrations, while maintaining robust organizational controls and collaboration through DevOps.

Important distinction: GitHub, Git, VSTS (now Azure DevOps), TFVC, and TFS are often confused but serve different purposes. Git is a distributed version control system, while GitHub is a cloud-based platform built around Git for hosting repositories and facilitating collaboration. Azure DevOps (formerly VSTS) provides a suite of tools for CI/CD, project tracking, and integrates with Git or TFVC. TFVC (Team Foundation Version Control) is a centralized version control system, and TFS (Team Foundation Server) is the on-premises predecessor to Azure DevOps, supporting both TFVC and Git. Choosing the right tool depends on your workflow needs: GitHub for open collaboration and Git-based source control, Azure DevOps for enterprise project management and pipelines, and TFVC/TFS for legacy or centralized version control environments.

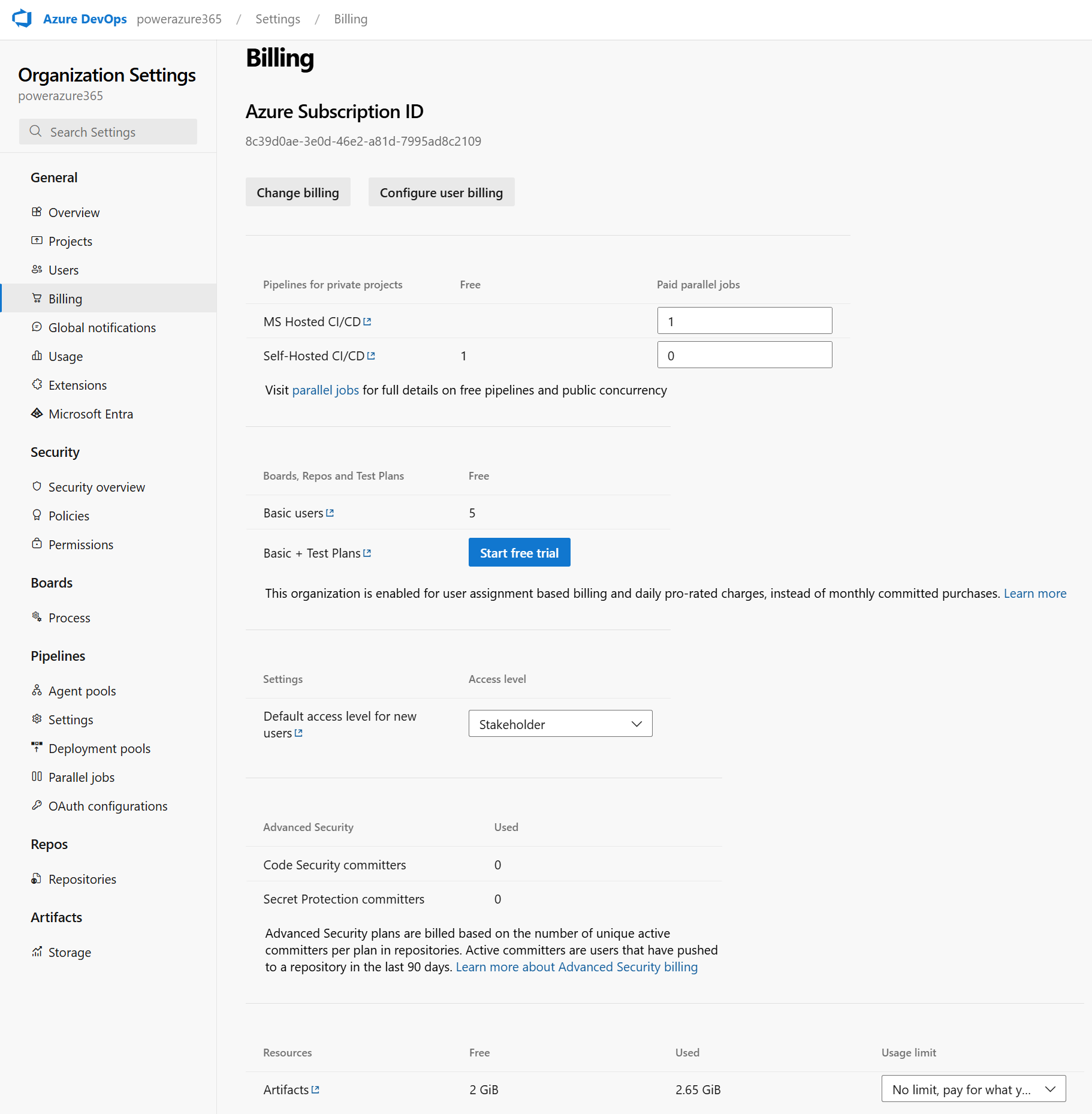

4. Paid Agents & Storage Limits

When working with build pipelines for F&O, you’ll need to consider:

Azure Artifacts Storage: By default, Azure DevOps provides 2 GB of free storage for artifacts. If you’re building large deployable packages or storing multiple versions, you may need to purchase additional storage.

In addition to Azure Artifacts storage, you will most likely need to purchase a paid agent (parallel job) for your build pipelines, especially in the Unified ALM and PPAC era. The free tier offers 1,800 minutes per month, and each run is limited to 60 minutes, which means at most 30 full-length runs per month. In PPAC, pipeline execution is not asynchronous: you must wait for the entire run to complete and receive the full logs before proceeding. Ideally, Microsoft would provide a more streamlined deployment experience like LCS, where you could simply launch a deployment and receive a notification upon completion.

For automated notifications, you can leverage the msprov_operationhistory table in Dataverse (by the way, Microsoft: could you make this table read-only for audit purposes… 😊) and quickly build a Power Automate flow that alerts you when a deployment is finished. Also remember the model-driven app Finance and Operations Package Manager for managing deployable packages. Azure DevOps also offers other out-of-the-box notification features to keep your team informed throughout the process.

💡 Tip: Use retention policies to automatically delete old artifacts and save space.

DevOps Wiki and Effective Markdown Documentation

Azure DevOps Wiki is a powerful tool for creating, sharing, and maintaining project documentation directly within your DevOps environment. Wikis can be used to capture onboarding guides, architecture decisions, troubleshooting steps, and process documentation, making knowledge easily accessible for your team.

To create high-quality documentation, you can use markdown syntax both in Wiki pages and in README.md files. Markdown allows you to format text with headings, lists, code blocks, tables, and links, ensuring your documentation is clear and visually organized. For example, use # for headings, * or - for bullet lists, and triple backticks (```) for code snippets.

Wiki: Start by creating a Wiki in your Azure DevOps project. You can add pages, organize them hierarchically, and collaborate in real time. Use templates for consistency across documentation.

README Files: Place a README.md file at the root of your repository to provide an overview, setup instructions, and links to additional resources. This file is often the first thing new contributors see, so keep it concise and well-structured.

For best results, include screenshots, diagrams, and example commands in your Markdown files. Regularly update documentation to reflect changes in processes or technology and encourage team members to contribute improvements.

You can also use Copilot to generate it for you ☺️. Copilot can help draft initial Wiki pages or README files, suggest Markdown formatting, and help you keep documentation up to date as your repository changes, saving you time and ensuring consistency.

Final Important Step: Service Connections (App Registration & Service Principal Setup)

To automate deployments and interact with Power Platform or LCS environments from Azure DevOps or GitHub Actions, you need to create a secure service connection using an Azure AD App Registration and a Service Principal.

When creating an App Registration and Service Principal for Azure DevOps service connections, you need to assign specific API permissions to enable secure automation and integration. The service principal authenticates with its own credentials because it acts on behalf of your automation rather than a user; its actual access to a Power Platform environment comes from the application user and security role you assign in step 4 below.

For most DevOps scenarios, including Power Platform or LCS deployments, you should add permissions such as Microsoft Graph > Application.Read.All (to allow the app to read directory data) and any additional permissions required by the target resource (for example, Dynamics CRM > user_impersonation, which is a delegated permission, if you work with Power Platform). After adding the required permissions, be sure to click Grant admin consent so the service principal can use them without user interaction.

Always review the documentation for your specific integration to confirm which permissions are necessary and follow the principle of least privilege by only granting what is required for your automation tasks.

Quick recap: to create an Azure App Registration, your account needs the Application Administrator or Cloud Application Administrator role in Microsoft Entra ID (formerly Azure Active Directory), unless your tenant allows all users to register applications. Without the required permissions, you won't be able to register applications or manage their settings. Check your role in the Azure portal before proceeding.

1. Create an App Registration in Azure AD

Go to https://portal.azure.com > Microsoft Entra ID (formerly Azure Active Directory) > App registrations.

Click New registration.

Name it (e.g., DevOps-PowerPlatform-SP).

Set the redirect URI to https://dev.azure.com (optional for DevOps).

Click Register.

2. Generate a Client Secret

In your App Registration, go to Certificates & secrets.

Click New client secret.

Set an expiration (e.g., 12 or 24 months).

Copy the secret value immediately—you won’t be able to retrieve it later.

3. Assign API Permissions

For Power Platform (Dataverse):

Go to API permissions > Add a permission > APIs my organization uses.

Search for PowerApps Service and Dynamics CRM.

Add delegated and/or application permissions:

user_impersonation (for Dataverse)

Environment.Read.All, Environment.Write.All, etc. (for PPAC)

For LCS (if still used):

Add permissions for Microsoft Dynamics Lifecycle Services. I described how to create an App Registration for the LCS API a long time ago here (see the Installation part): https://www.powerazure365.com/blog-1/data-alm-dynamics-365-finance-operations

Note: LCS integration is being deprecated in favor of PPAC (sooner or later, most likely in 2027). Again, this article targets the new generation, i.e. the PPAC era only. But if you have been live on F&O for a few years, you may still have a few LCS environments left, so it is worth adopting this new ALM approach now and changing only the last mile: by then you will already be comfortable with the new ALM generation.

4. Assign Roles to the Service Principal

For Power Platform:

Go to PPAC : https://admin.powerplatform.microsoft.com

Select the environment > Settings > Users + permissions > Application users. (S2S Apps)

Add your App Registration as an Application User.

Assign the appropriate security role (e.g., System Administrator or custom ALM role).

For LCS:

In LCS, go to your project > Project Users

Also add a dedicated service account without MFA (argh… yes, a good improvement in PPAC here!), as you will need it when you configure the Azure DevOps service connection for LCS.

Assign the "Environment Manager" role.

5. Create the Service Connection in Azure DevOps

Go to your Azure DevOps project > Project Settings > Service connections.

Click New service connection > Choose:

Power Platform (for PPAC)

Dynamics Lifecycle Services (for LCS)

Fill in:

Tenant ID

Client ID (from App Registration created before)

Client Secret (from App Registration created before and just for the PPAC connection here)

Server URL (for PPAC): the Dataverse (CRM) environment URL of your Unified Experience environment, not the F&O one!

Username/Password (only for LCS mode)

LCS API endpoint: be careful, it differs depending on whether your LCS project is in the US, the EU, or another region. You can double-check it here: https://ariste.info/dynamics365almguide/creating-the-lcs-connection/

Name the connection (e.g., PPAC-ServiceConnection) and save. Remember this name, as you will use it in the YAML files later on!

❗ Common Pitfall:

If your pipeline fails to authenticate, double-check that:

The App Registration has the correct permissions

The client secret hasn’t expired

The service principal is added as an Application User in the environment

The environment URL is correct (e.g., https://org.crm4.dynamics.com)
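The last check is easy to automate as a pre-flight step. Below is a minimal bash sketch, assuming commercial-cloud Dataverse hosts follow the `https://<org>.crm<N>.dynamics.com` pattern shown above and F&O hosts contain `operations.dynamics.com`. The function name `check_ppac_url` is hypothetical, and sovereign clouds use other domains, so treat it as a heuristic rather than a definitive validation.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check for the Power Platform service connection URL.
# Assumption: commercial-cloud Dataverse hosts look like https://<org>.crm<N>.dynamics.com
# (crm, crm4, crm11, ...); F&O hosts contain operations.dynamics.com.
check_ppac_url() {
  local url="$1"
  local fno_pattern='operations\.dynamics\.com'
  local dataverse_pattern='^https://[A-Za-z0-9-]+\.crm[0-9]*\.dynamics\.com/?$'
  if [[ "$url" =~ $fno_pattern ]]; then
    echo "ERROR: $url looks like the F&O URL; use the Dataverse (CRM) URL instead" >&2
    return 1
  fi
  if [[ "$url" =~ $dataverse_pattern ]]; then
    echo "OK: $url"
    return 0
  fi
  echo "WARN: $url does not match the expected Dataverse URL pattern" >&2
  return 2
}
```

For example, `check_ppac_url "https://org.crm4.dynamics.com"` prints `OK: https://org.crm4.dynamics.com` and returns 0, while an `*.operations.dynamics.com` URL returns 1. In a pipeline script step, any non-zero exit code fails the step before the Power Platform tasks try to authenticate.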

Security Tip: Always store your client secrets in Azure Key Vault or GitHub Secrets. Never hardcode them in your YAML files.

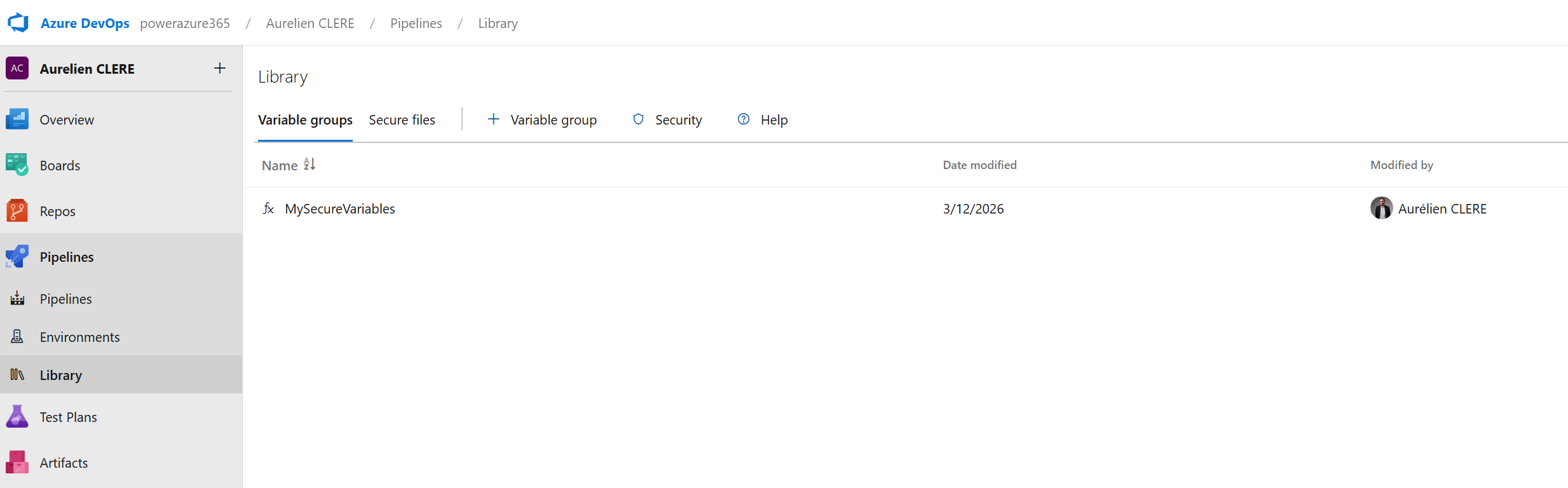

Using Variable Groups in YAML Pipelines

In Azure DevOps YAML pipelines, variable groups are a powerful feature that allows you to define and manage sets of variables centrally. These groups can be linked to one or more pipelines, making it easy to reuse configuration values like connection strings, environment names, or credentials across multiple builds and releases.

To use a variable group in your YAML pipeline, first create the group in the Azure DevOps portal and add your variables. Then, reference the group in your pipeline definition using the variables keyword, for example:

```yaml
variables:
- group: MyVariableGroup
```

This will make all variables in MyVariableGroup available throughout your pipeline. You can access them using the $(variableName) syntax.

Storing Secrets with Azure Key Vault

For sensitive information, such as client secrets or API keys, it's best to store them securely in Azure Key Vault. Azure DevOps integrates with Key Vault so you can link a variable group directly to a Key Vault, ensuring secrets are never exposed in your pipeline code or logs.

Create an Azure Key Vault and add your secrets.

In Azure DevOps, create a variable group and link it to your Key Vault.

Reference the variable group in your YAML pipeline as shown above. Secrets from the Key Vault will be injected as pipeline variables and marked as secret, so their values are masked in logs.

This approach ensures that sensitive credentials are centrally managed, rotated easily, and never hardcoded in your YAML files—improving both security and maintainability.
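One detail is worth knowing when you consume these variables from a `script` step: regular pipeline variables are exposed to scripts as environment variables, but secret variables (including those coming from a Key Vault-linked group) are not, so you have to map them explicitly with the step's `env:` keyword. The bash sketch below is a hypothetical fail-fast check, `require_env`, that reports missing variables by name without ever printing their values. `check-env.sh`, `CLIENT_ID`, `CLIENT_SECRET` and `$(ClientSecret)` are example names, not required ones.

```bash
#!/usr/bin/env bash
# Hypothetical fail-fast check for a pipeline script step. Secrets must be mapped
# explicitly in the YAML step, for example:
#   - script: ./check-env.sh
#     env:
#       CLIENT_SECRET: $(ClientSecret)   # example secret from the Key Vault-linked group
require_env() {
  local name missing=0
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable called $name.
    if [[ -z "${!name:-}" ]]; then
      echo "Missing required variable: $name" >&2   # print the name only, never a value
      missing=1
    fi
  done
  return "$missing"
}
```

Calling `require_env CLIENT_ID CLIENT_SECRET || exit 1` at the top of a script fails the step with a readable message instead of a confusing authentication error later on.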

If you’re using GitHub instead of Azure DevOps, you’ll use the same App Registration and store the credentials as GitHub Secrets. More on this in the GitHub section.

Let’s move on to the next section: Git integration, branching strategy and policies, and pull requests.

Git Integration, Branching Strategy & Pull Requests for Dynamics 365 F&O

Now that our environment and service connections are ready, let’s move into one of the most important shifts in the Unified ALM journey: moving from TFVC to Git.

Many Dynamics 365 F&O consultants and developers have historically used TFVC (Team Foundation Version Control), especially when working with Lifecycle Services (LCS) and Visual Studio. But with the Unified Developer Experience (UDE) and the move to Git-based workflows, it’s time to embrace a more modern, flexible, and collaborative approach to source control.

Let’s break it down step by step.

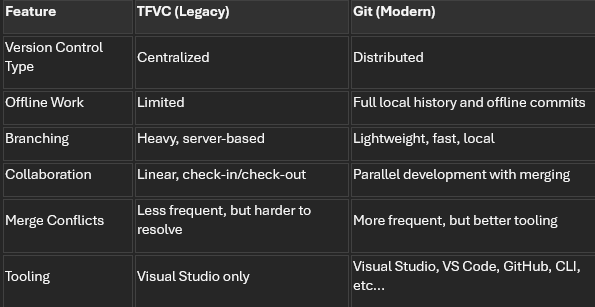

TFVC vs Git: Quick Comparison

Git is the industry standard today for a reason. It’s fast, flexible, and built for collaboration. And with GitHub and Azure DevOps both supporting Git natively, it’s the foundation of any modern ALM strategy.

Branching Strategy: What Works for Dynamics 365 F&O?

In traditional web or software projects, branching strategies like GitFlow or trunk-based development are common. But for Dynamics 365 F&O, we need to adapt these patterns to fit the realities of ERP development—longer testing cycles, multiple environments (Dev, UAT, PreProd, Prod), and strict release governance.

Here’s a recommended branching strategy tailored for F&O projects:

Main Branches

main or prod: The production-ready code. Only validated, tested, and approved code lives here.

develop: The integration branch for all new features. This is what gets deployed to UAT or PreProd.

Supporting Branches

feature/*: For new features or enhancements. Each developer creates their own feature branch.

bugfix/*: For fixing bugs found in UAT or production.

release/*: Optional. Used to prepare a release candidate from develop before merging to main.

💡 Tip: Keep branches short-lived. Merge early, merge often.

In addition to the strategies mentioned above, some teams may also benefit from adopting hotfix/* branches for urgent fixes that must be applied directly to production, or experiment/* branches for prototyping and testing ideas without impacting main development flows. Another approach is using environment-specific branches, such as dev/* or uat/*, to isolate changes intended for particular deployment stages. The choice of branching strategy should align with the team's workflow and release cadence to ensure smooth collaboration and efficient delivery.
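Branch names stay consistent more easily when they are checked automatically. Here is a minimal bash sketch of such a check. It assumes the `feature/SCM-1234-description` convention used later in this article for `feature/`, `bugfix/` and `hotfix/` branches, and a free-form name for `release/` and `experiment/`; the function `is_valid_branch` and these exact patterns are assumptions to adapt to your own strategy.

```bash
#!/usr/bin/env bash
# Hypothetical branch-name check. Assumed conventions:
#   feature|bugfix|hotfix/<WORKITEM>-<short-description>  e.g. feature/SCM-1234-add-vendor-validation
#   release|experiment/<free name>                         e.g. release/10.0.46
is_valid_branch() {
  local branch="$1"
  local with_item='^(feature|bugfix|hotfix)/[A-Z]+-[0-9]+-[a-z0-9-]+$'
  local free_name='^(release|experiment)/[A-Za-z0-9._-]+$'
  case "$branch" in
    main|develop) return 0 ;;  # long-lived branches are always accepted
  esac
  [[ "$branch" =~ $with_item || "$branch" =~ $free_name ]]
}
```

In an Azure DevOps PR validation pipeline, you could call it as `is_valid_branch "${SYSTEM_PULLREQUEST_SOURCEBRANCH#refs/heads/}"`, since the predefined `System.PullRequest.SourceBranch` variable contains the full `refs/heads/...` ref.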

Branch Git Policies: Enforcing Quality

To maintain code quality and avoid chaos, apply these branch policies in Azure DevOps or GitHub:

Require pull requests (PRs) to merge into develop or main (this one is very important!)

Require at least 1–2 reviewers for each PR

Enforce successful build validation before merging

Require linked work items (e.g., DevOps task or GitHub issue)

Enforce commit message formatting (optional but useful)

Disallow direct pushes to protected branches

These policies help ensure that every change is reviewed, tested, and traceable.
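For the optional commit message rule, a lightweight option on the developer side is a Git `commit-msg` hook: Git runs `.git/hooks/commit-msg` with the path of the file holding the proposed message and aborts the commit if the hook exits non-zero. The bash sketch below assumes the `SCM-1234: summary` format used in the workflow further down; the pattern and the `check_commit_msg` name are assumptions. Local hooks are not shared through the repository by default, so the server-side branch policies remain your real safety net.

```bash
#!/usr/bin/env bash
# Sketch of .git/hooks/commit-msg (make it executable with chmod +x).
# Assumed format: "<WORKITEM>: <summary>", e.g. "SCM-1234: Added vendor validation".
check_commit_msg() {
  local first_line pattern='^[A-Z]+-[0-9]+: .+'
  first_line=$(head -n 1 "$1")
  # Let Git's own merge and revert messages through (e.g. created by git pull).
  [[ "$first_line" == Merge\ * || "$first_line" == Revert\ * ]] && return 0
  if [[ ! "$first_line" =~ $pattern ]]; then
    echo "Commit message must start with '<WORKITEM>: ', e.g. 'SCM-1234: Fix vendor validation'" >&2
    return 1
  fi
}

# When executed by Git as a hook (not sourced), validate the message file it passed in.
if [[ "${BASH_SOURCE[0]}" == "$0" && $# -ge 1 ]]; then
  check_commit_msg "$1"
fi
```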

Pull Requests: The Heart of Collaboration

A Pull Request (PR) is how you propose changes to a shared branch (like develop or main). It’s not just about merging code—it’s about collaboration, quality, and accountability.

Here’s what happens in a typical PR workflow:

A developer finishes a feature or fix in their personal branch.

They push their changes to the remote repository.

They open a PR targeting develop (or main for hotfixes).

Reviewers (usually a Tech Lead or peer developers) review the code.

Comments, suggestions, or requested changes are addressed.

Once approved and validated by the build pipeline, the PR is merged. Later on, we will look at the YAML pipelines needed for this build validation on each PR.

💡 Tip: Use PR templates to guide contributors on what to include (e.g., description, screenshots, linked work items).

Day in the Life of a Developer Using Git with UDE

Let’s walk through a typical daily workflow for a Dynamics 365 F&O developer using Git and the Unified Developer Experience:

Start your day by opening Visual Studio on your local machine, connected to your UDE (Unified Developer Environment). And probably grab some coffee, as you will need to wait for each deployment to the UDE 😊

Pull the latest changes from the develop branch to stay up to date:

```bash
git checkout develop
git pull origin develop
```

Create your personal feature branch:

```bash
git checkout -b feature/SCM-1234-add-vendor-validation
```

(Use a naming convention like feature/SCM-1234-description where SCM-1234 is your work item ID.)

Link your branch to a work item in Azure DevOps or GitHub:

In Azure DevOps: associate your branch with a work item.

In GitHub: reference the issue number in your commit or PR (e.g., Fixes #123).

Do your development work in Visual Studio:

Modify X++ code, metadata, or models.

Build and test in your UDE.

Commit your changes:

```bash
git add .
git commit -m "SCM-1234: Added vendor validation logic to VendTable form"
```

Push your branch to the remote repository:

```bash
git push origin feature/SCM-1234-add-vendor-validation
```

Open a Pull Request:

Target: develop branch

Add reviewers (e.g., Tech Lead, peer developer)

Link the work item or issue

Add a description and screenshots if needed

Wait for review and approval:

Address feedback if any

Once approved and build passes, the PR is merged

Clean up:

```bash
git checkout develop
git pull origin develop
git branch -d feature/SCM-1234-add-vendor-validation
```
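If you repeat the first steps every morning, a small shell function can wrap them. The sketch below is hypothetical (`start_feature` is not a standard Git command): it assumes a remote named `origin` with a `develop` branch and derives the branch name from a work item ID and a short description, following the `feature/SCM-1234-description` convention above.

```bash
#!/usr/bin/env bash
# Hypothetical helper for the "start of day" steps: update develop, then branch from it.
# Assumes a remote named "origin" that has a "develop" branch.
start_feature() {
  local item="$1" description="$2" slug branch
  # "Add vendor validation" -> "add-vendor-validation": lowercase, every run of
  # characters outside a-z0-9 becomes one dash, leading/trailing dashes are trimmed.
  slug=$(printf '%s' "$description" | tr '[:upper:]' '[:lower:]' | tr -cs 'a-z0-9' '-' | sed 's/^-*//; s/-*$//')
  branch="feature/${item}-${slug}"
  git checkout develop &&
    git pull origin develop &&
    git checkout -b "$branch" &&
    echo "$branch"
}
```

Running `start_feature SCM-1234 "Add vendor validation"` updates develop and switches to `feature/SCM-1234-add-vendor-validation`, printing the branch name on its last line of output.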

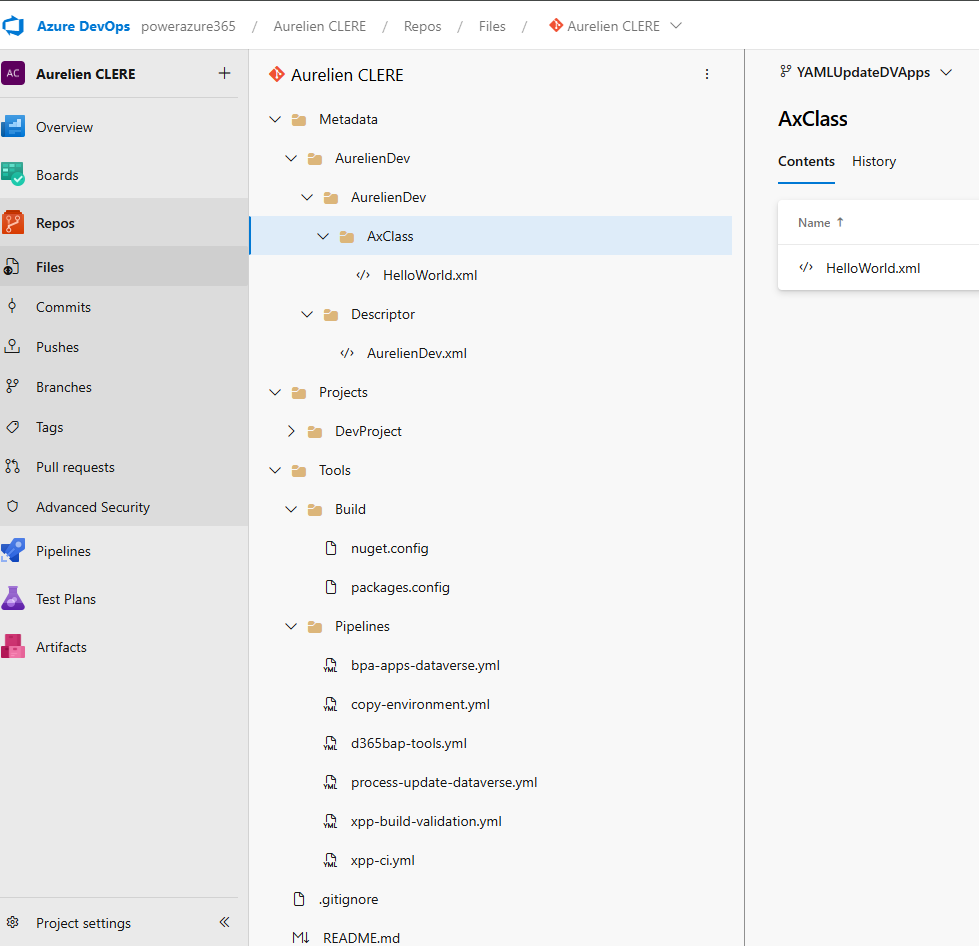

Before we dive into YAML pipelines, it's essential to understand how to properly organize your Git repository for F&O development. A well-structured repository makes automation easier, improves collaboration, and ensures your pipelines run smoothly.

My Recommended Repository Layout (but it’s mine, so… you can do other way if you like for sure 😊)

Here's the folder structure I use across my Dynamics 365 F&O projects (and what I recommend you follow):

```text
/Trunk (or /Main)
├── /Metadata
│   ├── /YourCustomModel1
│   │   ├── /Descriptor
│   │   │   └── YourCustomModel1.xml
│   │   ├── /AxClass
│   │   ├── /AxTable
│   │   └── /AxForm
│   ├── /YourCustomModel2
│   └── /ISVModels (if applicable)
├── /Projects
│   └── /AzureBuild
│       ├── YourCustomModel1.rnrproj
│       ├── YourCustomModel2.rnrproj
│       ├── AzureBuild.sln (solution file grouping all projects)
│       ├── nuget.config
│       └── packages.config
├── /Tools
│   ├── /Build
│   │   ├── nuget.config (alternative location)
│   │   └── packages.config (alternative location)
│   └── /Pipelines
│       ├── build-pipeline.yml
│       ├── deploy-pipeline.yml
│       └── pr-validation-pipeline.yml
├── /Scripts
│   ├── /Deployment
│   │   └── Pre-Post-Scripts.ps1
│   └── /Utilities
├── /Licenses (optional)
│   └── ISVLicenses.txt
├── .gitignore
└── README.md
```
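To bootstrap this layout in a fresh repository, a few lines of shell are enough. The sketch below is hypothetical (`scaffold_repo` is a name chosen for illustration). It only creates the non-model folders, because model folders under /Metadata should be created by Visual Studio, and it adds `.gitkeep` placeholders because Git does not track empty folders.

```bash
#!/usr/bin/env bash
# Hypothetical scaffold for the repository layout above. Model folders are not created
# here: let Visual Studio create them under Metadata.
scaffold_repo() {
  local root="$1" dir
  local folders=(
    Metadata
    Projects/AzureBuild
    Tools/Build
    Tools/Pipelines
    Scripts/Deployment
    Scripts/Utilities
  )
  for dir in "${folders[@]}"; do
    mkdir -p "$root/$dir"
    # Git does not track empty folders; a .gitkeep placeholder keeps them in the repo.
    touch "$root/$dir/.gitkeep"
  done
  touch "$root/.gitignore" "$root/README.md"
}
```

For example, `scaffold_repo ./Trunk` creates the skeleton; you then add your .rnrproj and .sln files under Projects/AzureBuild from Visual Studio.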

Why This Structure?

Let me explain the reasoning behind each folder:

/Metadata

This is where all your X++ source code lives. Each custom model has its own folder with:

Descriptor folder: Contains the model descriptor XML (required for build)

Element folders: AxClass, AxTable, AxForm, etc.

💡 Tip: Never manually edit files in /Metadata. Always use Visual Studio to ensure consistency.

/Projects

This contains Visual Studio projects (.rnrproj) for each package you want to build. Key points:

One project per package: You only need one project per package, even if the package contains multiple models

Solution file (.sln): Groups all your projects together and defines build order

Empty projects are OK: The project doesn't need to contain objects—it's just used to tell the compiler which package to build

/Tools/Build or /Projects/AzureBuild

This is where your nuget.config and packages.config files live. These files are critical for build pipelines (we'll cover them in detail below).

You can place them in either location—just be consistent and reference the correct path in your pipeline variables.

/Tools/Pipelines

Store your YAML pipeline definitions here. This keeps your automation code versioned alongside your source code.

/Scripts

Any PowerShell or other scripts you need for deployment, environment setup, or utilities.

Essential file: .gitignore (I will also share mine in my GitHub example project, together with all the YAML files).

Create a .gitignore file at the root to exclude unnecessary files:

```gitignore
########################################
# Visual Studio – User-specific files
########################################
*.user
*.userosscache
*.suo
*.rsuser
*.sln.docstates
*.wsuo
*.DotSettings.user

########################################
# Visual Studio – Build results
########################################
[Bb]in/
[Oo]bj/
bld/
x64/
x86/
[Ww][Ii][Nn]32/
[Aa][Rr][Mm]/
[Aa][Rr][Mm]64/
#*.dll
*.exe
*.pdb
*.cache
*.dbmdl
*.ipch
*.aps
*.ncb
*.opendb
*.opensdf
*.sdf
*.VC.db
*.VC.VC.opendb
*.pidb
*.tlog
*.log
*.tmp
*.tmp_proj
*.vspscc
*.vssscc
*.psess
*.vsp
*.vspx
*.coverage
*.coveragexml
*.e2e
*.sbr
*.tlb
*.tli
*.tlh
*.ilk
*.meta
*.obj
*.iobj
*.pch
*.rsp
*.svclog
*.scc
*.binlog

########################################
# Visual Studio – IDE folders
########################################
.vs/
# Ignore the content of .vscode, not the folder itself, so the ! exceptions below apply
.vscode/*
!.vscode/settings.json
!.vscode/tasks.json
!.vscode/launch.json
!.vscode/extensions.json
!.vscode/*.code-snippets

########################################
# GitHub Copilot / AI Indexing
########################################
.vs/**/CopilotIndices/
.vs/**/CopilotIndices/*
.vs/**/CopilotIndices/**/*.db
.vs/**/CopilotIndices/**/*.db-shm
.vs/**/CopilotIndices/**/*.db-wal
CodeChunks.db*
SemanticSymbols.db*

########################################
# D365FO – Build artifacts and metadata
########################################
# Keep bin folders under Metadata included (they are excluded by [Bb]in/ above) and
# ignore only their content, so that the exemption at the end of this file can apply
!Metadata/**/bin/
Metadata/**/bin/*
Metadata/**/Reports/
Metadata/**/Resources/
Metadata/**/WebContent/
Metadata/**/XppMetadata/
Metadata/**/XppSource/
Metadata/**/BuildModelResult.xml
Metadata/**/BuildProjectResult*.xml
Metadata/**/CompileLabels.xml
Metadata/**/BPCheck.xml
Metadata/**/Resources/**/*.resources.dll
Metadata/**/Resources/**/*.delete
Metadata/**/Resources/*.dll
Metadata/*/*.xml
Metadata/*/*.xref
*.version
*.rdl
*.xref
*.tmp
*.log

# Keep label resources
!Metadata/**/LabelResources/

# Git ignore exemptions if needed
!Metadata/**/bin/MyISVDLLExample.dll
```
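Negation rules (`!`) are easy to get wrong: Git cannot re-include a file if one of its parent folders is excluded, which is why a folder must stay included and only its content (`/*`) be ignored when you want exceptions inside it. A quick way to verify rules is `git check-ignore`. The bash sketch below builds a throwaway repository with a reduced excerpt of the rules above; the helper names `is_ignored` and `make_demo_repo` are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: test .gitignore rules in a throwaway repository with `git check-ignore`.
# The rule set is a reduced excerpt of the file above, focused on the ! exceptions.
is_ignored() {
  # git check-ignore -q exits 0 if the path is ignored and 1 if it is not.
  git -C "$1" check-ignore -q -- "$2"
}

make_demo_repo() {
  local repo
  repo=$(mktemp -d)
  git -C "$repo" init -q
  cat > "$repo/.gitignore" <<'EOF'
.vscode/*
!.vscode/settings.json
Metadata/**/bin/*
!Metadata/**/bin/MyISVDLLExample.dll
EOF
  echo "$repo"
}
```

Against your real repository, `git check-ignore -v <path>` additionally prints the file, line number and pattern that matched, which is handy when debugging the full file.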

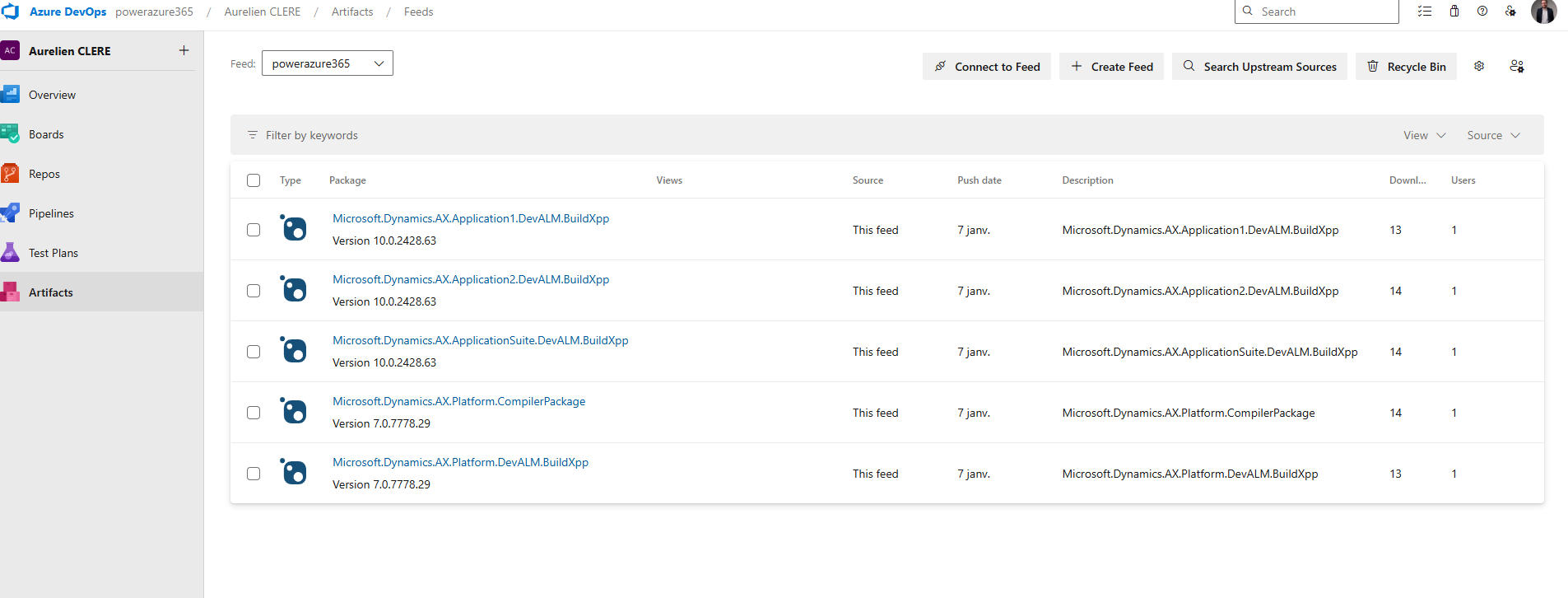

Azure DevOps Artifacts & NuGet Packages for F&O

Now let's cover one of the most critical parts of F&O build automation: managing NuGet packages. To build F&O packages without a full build VM, we rely on NuGet packages that contain the compiler and reference binaries. Let’s see how to set this up.

What Are These NuGet Packages?

To build X++ code without a full Build VM, you need compiler tools and reference binaries distributed as NuGet packages. Microsoft currently provides these in the LCS Shared Asset Library (we will see later how and when Microsoft will replace this part with PAC CLI and a semi-public feed):

Microsoft.Dynamics.AX.Platform.CompilerPackage (~200 MB)

Contains xppc.exe (X++ compiler) and build tools

Name in LCS: PUXX/10.X.XX – Compiler Tools

Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp (~150 MB)

Compiled Platform code (optimized for building)

Name in LCS: PUXX/10.X.XX – Platform Build Reference

Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp (~80 MB)

Application 1 compiled code

Name in LCS: PUXX/10.X.XX – Application 1 Build Reference

Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp (~80 MB)

Application 2 compiled code

Name in LCS: PUXX/10.X.XX – Application 2 Build Reference

Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp (~150 MB)

Application Suite compiled code

Name in LCS: PUXX/10.X.XX – Application Suite Build Reference

Important: Download NuGet packages that exactly match the version of your target Finance & Operations environment, or an earlier version, never a newer one. For example, if your environment is on 10.0.46, use NuGet packages for 10.0.46 or an earlier compatible version. In addition, I recommend always downloading the General Availability (GA) version rather than every release Microsoft provides for each Platform Quality Update (PQU). Finally, update the packages as soon as possible whenever you update the F&O version of your environments.
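The "same or older, never newer" rule is simple to enforce in a script. The bash sketch below is hypothetical (`nuget_release_ok` is not an existing tool). It compares the F&O release of the packages you downloaded (for example 10.0.45) with your environment version using `sort -V` version ordering, which GNU coreutils supports.

```bash
#!/usr/bin/env bash
# Hypothetical guard: succeed only if the NuGet package release is the same as,
# or older than, the environment version (e.g. 10.0.45 or 10.0.46 for 10.0.46).
nuget_release_ok() {
  local package_release="$1" environment_version="$2" highest
  # sort -V orders version strings numerically field by field (10.0.9 < 10.0.46).
  highest=$(printf '%s\n%s\n' "$package_release" "$environment_version" | sort -V | tail -n 1)
  [[ "$highest" == "$environment_version" ]]
}
```

`nuget_release_ok 10.0.45 10.0.46` succeeds while `nuget_release_ok 10.0.47 10.0.46` fails. A plain string comparison would get a case like 10.0.9 versus 10.0.46 wrong, which is why version sort is used.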

Step-by-Step: Setting Up Azure Artifacts Feed

1. Create an Artifacts Feed

Go to your Azure DevOps project

Navigate to Artifacts > Create Feed

Name it (e.g., Dynamics365FO or D365-NuGet-Feed)

Visibility: Keep it private to your organization

Storage note: You get 2 GB free storage. The 5 NuGet packages total ~660 MB, so you should be fine. Use retention policies to clean up old versions.

2. Download NuGet.exe

Download from https://www.nuget.org/downloads

Save it to a local folder (e.g., C:\D365Build\)

Optionally, add it to your Windows PATH

Alternative: Use dotnet nuget commands if you have .NET SDK installed.

3. Install Credential Provider

Run this PowerShell command to install the Azure Artifacts credential provider:

```powershell
iex "& { $(irm https://aka.ms/install-artifacts-credprovider.ps1) }"
```

If it keeps asking for credentials, try:

```powershell
iex "& { $(irm https://aka.ms/install-artifacts-credprovider.ps1) } -AddNetfx"
```

4. Create nuget.config

In Azure DevOps, click Connect to feed > Select nuget.exe

Copy the XML content shown

Create a file named nuget.config in your local folder

Example content:

```xml
<?xml version="1.0" encoding="utf-8"?>
<configuration>
  <packageSources>
    <clear />
    <add key="Dynamics365FO" value="https://pkgs.dev.azure.com/YourOrg/YourProject/_packaging/Dynamics365FO/nuget/v3/index.json" />
  </packageSources>
</configuration>
```

The feed URL must match exactly what's shown in your "Connect to feed" page.

5. Create packages.config

Create a packages.config file listing all NuGet packages and their versions:

```xml
<?xml version="1.0" encoding="utf-8"?>
<packages>
  <package id="Microsoft.Dynamics.AX.Platform.CompilerPackage" version="7.0.7521.60" targetFramework="net40" />
  <package id="Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp" version="7.0.7521.60" targetFramework="net40" />
  <package id="Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp" version="10.0.2177.37" targetFramework="net40" />
  <package id="Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp" version="10.0.2177.37" targetFramework="net40" />
  <package id="Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp" version="10.0.2177.37" targetFramework="net40" />
</packages>
```

💡 How to get version numbers: Right-click the .nupkg file > Properties > Details tab. Or extract the package and look at the .nuspec file inside.
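With five packages, opening Properties one by one gets tedious. Below is a minimal Python sketch (my own helper, not part of Microsoft's tooling; the script name and folder are hypothetical) that reads the id and version from each package's .nuspec and prints a matching packages.config. It assumes the standard NuGet layout, where the .nuspec sits at the root of the .nupkg zip archive, and it hard-codes targetFramework="net40" as in the example above.

```python
import sys
import zipfile
from pathlib import Path
from xml.etree import ElementTree as ET


def parse_nuspec(xml_bytes):
    """Return (id, version) from the raw bytes of a .nuspec manifest."""
    root = ET.fromstring(xml_bytes)
    found = {}
    for elem in root.iter():
        # .nuspec files use a versioned XML namespace; compare local tag names only.
        tag = elem.tag.rsplit("}", 1)[-1]
        if tag in ("id", "version") and tag not in found:
            found[tag] = (elem.text or "").strip()
    return found["id"], found["version"]


def read_nupkg(nupkg_path):
    """A .nupkg is a zip archive with the .nuspec manifest at its root."""
    with zipfile.ZipFile(nupkg_path) as zf:
        name = next(n for n in zf.namelist() if n.endswith(".nuspec") and "/" not in n)
        return parse_nuspec(zf.read(name))


def package_line(pkg_id, version, framework="net40"):
    return f'  <package id="{pkg_id}" version="{version}" targetFramework="{framework}" />'


def build_packages_config(folder):
    """Build packages.config content from every .nupkg found in `folder`."""
    lines = ['<?xml version="1.0" encoding="utf-8"?>', "<packages>"]
    for nupkg in sorted(Path(folder).glob("*.nupkg")):
        lines.append(package_line(*read_nupkg(nupkg)))
    lines.append("</packages>")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical usage: python make_packages_config.py C:\D365Build > packages.config
    print(build_packages_config(sys.argv[1] if len(sys.argv) > 1 else "."), end="")
```

Compare the output with your hand-written packages.config before committing; a mismatch here is exactly what later causes "Unable to find package" on the build agent.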

6. Upload NuGet Packages to Azure Artifacts

Now push each package to your feed with PowerShell:

# Navigate to the folder with the NuGet packages
cd C:\D365Build\
# Push each package (update the feed name and paths)
nuget.exe push -Source "Dynamics365FO" -ApiKey az Microsoft.Dynamics.AX.Platform.CompilerPackage.7.0.7521.60.nupkg
nuget.exe push -Source "Dynamics365FO" -ApiKey az Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp.7.0.7521.60.nupkg
nuget.exe push -Source "Dynamics365FO" -ApiKey az Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp.10.0.2177.37.nupkg
nuget.exe push -Source "Dynamics365FO" -ApiKey az Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp.10.0.2177.37.nupkg
nuget.exe push -Source "Dynamics365FO" -ApiKey az Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp.10.0.2177.37.nupkg

If you have a slow or unreliable internet connection, you may encounter timeout errors during the NuGet push process. By default, the timeout is set to 5 minutes, but you can increase it by adding the -Timeout parameter to your command. For example:

nuget.exe push -Source "Dynamics365FO" -ApiKey az -Timeout 1200 Microsoft.Dynamics.AX.Platform.CompilerPackage.7.0.7521.60.nupkg

In this example, -Timeout 1200 sets the timeout to 20 minutes (1200 seconds). Adjust this value as needed to accommodate your connection speed.

7. Commit nuget.config and packages.config to Git

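Once uploaded, commit these two files to your repository. Make sure the folder you commit them to matches the NugetConfigsPath variable your build pipeline uses to locate them:

git add Projects/AzureBuild/nuget.config
git add Projects/AzureBuild/packages.config
git commit -m "Add NuGet configuration for build pipeline"
git push origin develop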

These files tell your build pipeline:

nuget.config: Where to find the NuGet feed

packages.config: Which packages and versions to restore

Tips & Best Practices

One nuget.config per repository

You don't need a separate file for each project. Use one centralized location and reference it via variables in your pipeline.

Update packages regularly

When Microsoft releases new quality updates, download the new NuGet packages, upload them, and update the version numbers in packages.config.

Use feed views for stability

Use the @Release view of your Artifacts feed (every feed gets @Local, @Prerelease and @Release views by default) and promote stable package versions to it. Reference this view in production pipelines.

Storage management

If you hit the 2 GB limit, delete old package versions you're no longer using: Artifacts > Select package > Versions > Delete old versions

⚠️ Common Pitfall:

If your build fails with "Unable to find package", double-check:

Feed URL in nuget.config is correct

Package versions in packages.config match what's in your feed

Your build agent has access to the feed (service connection or PAT)

Now that we have:

A clean Git repository structure

Visual Studio projects for each package

NuGet packages uploaded to Azure Artifacts

nuget.config and packages.config in source control

This Git-based workflow brings structure, traceability, and collaboration to your Dynamics 365 F&O development process. It may feel different from TFVC at first, but once you get used to it, you’ll never want to go back!

Next up, we’ll dive into YAML pipelines and how to automate your build and release process for F&O and Dataverse using PAC CLI and Unified Packages.

Let’s keep going!

YAML Pipelines, Environments & Workflow Approvals

Now that we've covered Git, branching, and repository structure, let's dive into the heart of automation: YAML pipelines. This is where all your DevOps magic happens: compiling X++ code, creating deployable packages, and deploying them to UAT and Production environments with proper governance and approvals. (By the way, good online YAML editors can help you write pipelines with the right indentation.)

What Are Pipelines?

A pipeline is a series of automated steps that build, test, and deploy your code. Think of it as a conveyor belt in a factory: code goes in at one end, and a production-ready deployable package comes out at the other end.

In Azure DevOps, pipelines can be defined in two ways:

Classic Pipelines (GUI-based): legacy and no longer recommended

YAML Pipelines (code-based): modern, version-controlled, and recommended

Why YAML?

Your pipeline is stored in Git alongside your code

Changes to the pipeline go through Pull Requests and code review

Pipelines are portable and reusable across projects

Full transparency and traceability

Pipeline Organization Strategy

For a Dynamics 365 F&O project, I recommend organizing your pipelines into three separate YAML files, each with a specific purpose:

· xpp-build-validation.yml

o Purpose: Validate Pull Requests. Runs on every PR to ensure code compiles successfully before merging.

o Trigger: Manual or PR only. Does NOT deploy to any environment.

· xpp-ci-uatonly.yml

o Purpose: Continuous Integration to UAT. Builds code and deploys only to UAT environment.

o Trigger: Scheduled (e.g., daily at 2 AM) or manual.

· xpp-ci.yml

o Purpose: Full CI/CD pipeline. Builds code, deploys to UAT, then to Production after approval.

o Trigger: Scheduled or manual. Requires approval before Production deployment.

This separation keeps your workflows clean and gives you flexibility. Let's break down each pipeline step by step. Of course, this is just an example; adapt it to your own needs 😊

Pipeline Anatomy: Step-by-Step Breakdown

I'll use xpp-ci.yml as the main example since it contains all the components (build + UAT + Production). The other pipelines follow the same structure but with fewer stages.

Pipeline Header & Triggers

YAML

name: $(Date:yy.MM.dd)$(Rev:.r)
trigger: none

What this does:

name: Defines the build number format. Here it's YY.MM.DD.revision (e.g., 26.03.06.1). This becomes your model version.

trigger: none: Disables automatic triggers on commits. The pipeline only runs manually or on schedule.

💡 Why disable triggers? For F&O projects with long build times (10–20 minutes, sometimes even more based on the number of extensions and models), you don't want every commit triggering a build. Instead, use Pull Request validation or scheduled builds.
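To see how the name format produces 26.03.06.1, here is a small Python approximation. It is a hypothetical helper: Azure DevOps computes the build number server-side, and this only mirrors the documented behaviour that the revision starts at 1 and increments while the rest of the number stays the same.

```python
from datetime import date


def next_build_number(run_date, previous_numbers):
    """Approximate `$(Date:yy.MM.dd)$(Rev:.r)`: a yy.MM.dd prefix followed by a
    revision that starts at 1 and goes up by one for each earlier run that
    produced the same prefix."""
    prefix = run_date.strftime("%y.%m.%d")
    revisions = []
    for number in previous_numbers:
        head, _, rev = number.rpartition(".")
        if head == prefix and rev.isdigit():
            revisions.append(int(rev))
    return f"{prefix}.{max(revisions, default=0) + 1}"


print(next_build_number(date(2026, 3, 6), []))                            # 26.03.06.1
print(next_build_number(date(2026, 3, 6), ["26.03.05.4", "26.03.06.1"]))  # 26.03.06.2
```

Because the date is part of the prefix, the revision effectively restarts at 1 every day, which keeps model versions short and readable.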

Scheduled Builds

YAML

schedules:
- cron: "0 2 * * *" # 2:00 AM every day
  displayName: Daily Build at 02:00 UTC
  branches:
    include:
    - main
  always: false

What this does:

Runs the pipeline daily at 2:00 AM UTC

Only if there have been changes to the main branch since the last build (always: false)

💡 Tip: Schedule builds during off-peak hours to avoid competing for resources.
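Since Azure Pipelines evaluates cron schedules in UTC, it helps to double-check what "0 2 * * *" means in your own time zone. A minimal Python sketch follows; the helper is hypothetical and only handles a fixed daily minute/hour, not full cron syntax.

```python
from datetime import datetime, timedelta, timezone


def next_daily_run(now_utc, hour=2, minute=0):
    """Next fire time of a daily schedule such as cron "0 2 * * *".
    Cron schedules are evaluated in UTC, so now_utc must be UTC-aware."""
    candidate = now_utc.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= now_utc:
        candidate += timedelta(days=1)  # today's slot has passed: use tomorrow's
    return candidate


run = next_daily_run(datetime(2026, 3, 6, 1, 30, tzinfo=timezone.utc))
print(run.isoformat())  # 2026-03-06T02:00:00+00:00
# The same instant at a fixed UTC+1 offset (real time zones also shift with DST).
print(run.astimezone(timezone(timedelta(hours=1))).isoformat())  # 2026-03-06T03:00:00+01:00
```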

Global Pool & Variables

YAML

pool:
  vmImage: 'windows-latest'

variables:
  App1Package: 'Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp'
  App2Package: 'Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp'
  AppSuitePackage: 'Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp'
  PlatPackage: 'Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp'
  ToolsPackage: 'Microsoft.Dynamics.AX.Platform.CompilerPackage'
  MetadataPath: '$(Build.SourcesDirectory)\Metadata'
  NugetConfigsPath: '$(Build.SourcesDirectory)\Tools\Build'
  NugetsPath: '$(Pipeline.Workspace)\NuGets'

What this does:

pool: Specifies the build agent. windows-latest is a Microsoft-hosted agent with Windows Server and Visual Studio pre-installed.

variables: Defines reusable values throughout the pipeline. Notice the NuGet package names match what we uploaded to Azure Artifacts.

Stage 1: Build X++ Code & Create Deployable Package

Now we get to the meat of the pipeline—the build stage.

YAML

stages:
- stage: Build
  displayName: X++ Build & Package
  jobs:
  - job: BuildXpp
    displayName: Build solution and create deployable package
    steps:

A stage is a logical grouping of jobs. A job is a series of steps that run sequentially on the same agent.

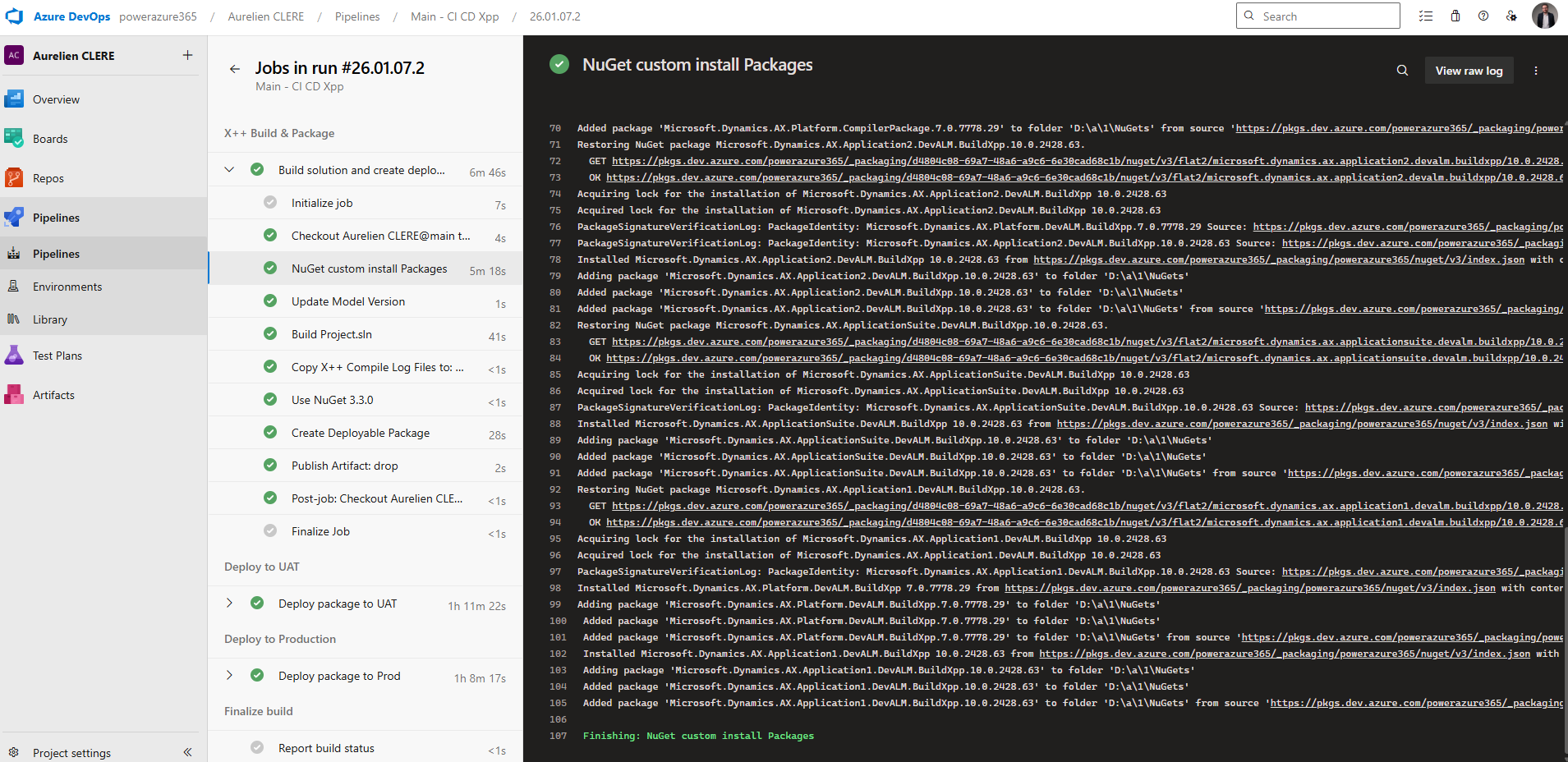

Step 1: Restore NuGet Packages

YAML

- task: NuGetCommand@2
  displayName: 'NuGet custom install Packages'
  inputs:
    command: custom
    arguments: 'install -Noninteractive $(NugetConfigsPath)\packages.config
      -ConfigFile $(NugetConfigsPath)\nuget.config -Verbosity Detailed
      -ExcludeVersion -OutputDirectory "$(NugetsPath)"'

What this does:

Downloads the 5 NuGet packages we uploaded to Azure Artifacts earlier

Uses packages.config to know which packages and versions to download

Uses nuget.config to know where the feed is located

-ExcludeVersion: Critical! This removes version numbers from folder names, so paths like $(NugetsPath)\$(ToolsPackage) work consistently

Example output:

NuGets/
├── Microsoft.Dynamics.AX.Platform.CompilerPackage/
├── Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp/
├── Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp/
├── Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp/
└── Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp/

Step 2: Update Model Version

YAML

- task: XppUpdateModelVersion@0
  displayName: 'Update Model Version'
  inputs:
    XppSourcePath: '$(MetadataPath)'
    VersionNumber: '$(Build.BuildNumber)'
    XppLayer: 8

What this does:

Updates the version number in your model descriptor XML files

VersionNumber: Uses the build number (e.g., 26.03.06.1)

XppLayer: 8: The ISV layer, used as the lowest layer whose models get the new version. Layer numbers follow the classic AX order (for example, 8 = ISV, 10 = VAR, 12 = CUS, 14 = USR)

Why update the version? This ensures every deployable package has a unique, traceable version number, which is critical for rollback and auditing. Again, adapt the format to your needs!
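To make the effect concrete, here is an illustrative Python sketch of what happens to one model descriptor. It is not the task's actual implementation: it assumes the descriptor's Layer and VersionMajor/VersionMinor/VersionBuild/VersionRevision elements, and it reflects my reading that models at or above the XppLayer value are updated.

```python
from xml.etree import ElementTree as ET

# Classic AX layer numbers as they appear in a descriptor's <Layer> element.
LAYERS = {"SYS": 0, "ISV": 8, "VAR": 10, "CUS": 12, "USR": 14}
VERSION_TAGS = ("VersionMajor", "VersionMinor", "VersionBuild", "VersionRevision")


def update_descriptor(xml_text, build_number, min_layer=LAYERS["ISV"]):
    """Write the four build-number parts into the Version* elements of one
    model descriptor, but only when the model's layer is >= min_layer.
    Returns the (possibly unchanged) XML text."""
    root = ET.fromstring(xml_text)
    if int(root.findtext("Layer", default="0")) < min_layer:
        return xml_text  # below the threshold: leave the model untouched
    parts = [str(int(p)) for p in build_number.split(".")]  # "03" -> "3"
    for tag, value in zip(VERSION_TAGS, parts):
        elem = root.find(tag)
        if elem is None:
            elem = ET.SubElement(root, tag)
        elem.text = value
    return ET.tostring(root, encoding="unicode")


sample = ("<AxModelInfo><Layer>14</Layer><Name>MyModel</Name>"
          "<VersionBuild>0</VersionBuild><VersionMajor>1</VersionMajor>"
          "<VersionMinor>0</VersionMinor><VersionRevision>0</VersionRevision></AxModelInfo>")
print(update_descriptor(sample, "26.03.06.1"))
```

The sketch only transforms a string; in the pipeline, the task edits the descriptor files under $(MetadataPath) for you.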

Step 3: Copy Binary Dependencies

YAML

- task: CopyFiles@2
  displayName: 'Copy Binary Dependencies to: $(Build.BinariesDirectory)'
  inputs:
    SourceFolder: '$(MetadataPath)'
    Contents: '**/bin/**'
    TargetFolder: '$(Build.BinariesDirectory)'

What this does:

Copies any existing compiled DLLs from /Metadata/**/bin/ folders to the build output directory

Required if you have ISV or binary dependencies that aren't recompiled

Step 4: Build the Solution with MSBuild

This is the most complex step—compiling X++ code.

YAML

- task: VSBuild@1
  displayName: 'Build Project.sln'
  inputs:
    solution: 'Projects/BuildProject/BuildProject.sln'
    vsVersion: '17.0'
    msbuildArgs: >
      /p:BuildTasksDirectory="$(NugetsPath)\$(ToolsPackage)\DevAlm"
      /p:MetadataDirectory="$(MetadataPath)"
      /p:FrameworkDirectory="$(NuGetsPath)\$(ToolsPackage)"
      /p:ReferenceFolder="$(NuGetsPath)\$(PlatPackage)\ref\net40;$(NuGetsPath)\$(App1Package)\ref\net40;$(NuGetsPath)\$(App2Package)\ref\net40;$(NuGetsPath)\$(AppSuitePackage)\ref\net40;$(MetadataPath);$(Build.BinariesDirectory)"
      /p:ReferencePath="$(NuGetsPath)\$(ToolsPackage)"
      /p:OutputDirectory="$(Build.BinariesDirectory)"

What this does:

solution: Points to your Visual Studio solution file

vsVersion: 17.0: Uses Visual Studio 2022 (MSBuild 17)

msbuildArgs: Passes critical parameters to the X++ compiler:

BuildTasksDirectory: Where MSBuild can find X++ build tasks

MetadataDirectory: Your source code location

FrameworkDirectory and ReferencePath: Both point to the Compiler Tools package ($(ToolsPackage))

ReferenceFolder: Semicolon-separated list of paths containing reference DLLs (Platform, App1, App2, AppSuite, your metadata, and binaries)

OutputDirectory: Where compiled DLLs are written

💡 Key insight: The ReferenceFolder is what allows the compiler to resolve dependencies without a full Build VM. It points to the ref\net40 folders inside your NuGet packages.
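The ReferenceFolder value is easy to get wrong when edited by hand. This Python sketch (a hypothetical helper, for illustration only) rebuilds it from the same ingredients as the YAML. The D:\a\1\... paths are assumptions standing in for $(Pipeline.Workspace) and friends on a typical Microsoft-hosted Windows agent.

```python
def reference_folder(nugets_path, packages, metadata_path, binaries_dir):
    """Assemble the semicolon-separated /p:ReferenceFolder value.
    With -ExcludeVersion, each package sits in <nugets_path>\\<package id>,
    so these paths do not change when you upgrade package versions."""
    refs = [f"{nugets_path}\\{package}\\ref\\net40" for package in packages]
    return ";".join(refs + [metadata_path, binaries_dir])


PACKAGES = [
    "Microsoft.Dynamics.AX.Platform.DevALM.BuildXpp",          # $(PlatPackage)
    "Microsoft.Dynamics.AX.Application1.DevALM.BuildXpp",      # $(App1Package)
    "Microsoft.Dynamics.AX.Application2.DevALM.BuildXpp",      # $(App2Package)
    "Microsoft.Dynamics.AX.ApplicationSuite.DevALM.BuildXpp",  # $(AppSuitePackage)
]

# Example paths only: typical Microsoft-hosted Windows agent locations.
value = reference_folder(r"D:\a\1\NuGets", PACKAGES, r"D:\a\1\s\Metadata", r"D:\a\1\b")
for entry in value.split(";"):
    print(entry)
```

This also shows why -ExcludeVersion matters: without it, every folder name would embed a version number and the list would need editing at each quality update.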

Step 5: Copy Compiler Log Files

YAML

- task: CopyFiles@2
  displayName: 'Copy X++ Compile Log Files to: $(Build.ArtifactStagingDirectory)\Logs'
  inputs:
    SourceFolder: '$(Build.SourcesDirectory)'
    Contents: |
      **\Dynamics.AX.*.xppc.*
      **\Dynamics.AX.*.labelc.*
      **\Dynamics.AX.*.reportsc.*
    TargetFolder: '$(Build.ArtifactStagingDirectory)\Logs'
  condition: succeededOrFailed()

What this does:

Copies compilation log files (errors, warnings, best practices) to the artifact staging area

condition: succeededOrFailed(): Runs even if the build fails, so you can review logs
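To check which files that step would collect (for example, in a local folder after a build), here is a small Python sketch. It is my own helper, not part of the pipeline, and it assumes the **\ prefix in the YAML simply means "in any folder", so only the file name has to match one of the three patterns.

```python
import fnmatch
from pathlib import Path

# Same file-name patterns as the CopyFiles step above.
LOG_PATTERNS = ("Dynamics.AX.*.xppc.*", "Dynamics.AX.*.labelc.*", "Dynamics.AX.*.reportsc.*")


def is_compile_log(file_name):
    """True if the file name matches one of the compiler/label/report log patterns."""
    return any(fnmatch.fnmatch(file_name, pattern) for pattern in LOG_PATTERNS)


def find_compile_logs(root_dir):
    """Recursively list the matching log files under root_dir."""
    return sorted(p for p in Path(root_dir).rglob("*") if p.is_file() and is_compile_log(p.name))
```

For instance, find_compile_logs(r"C:\Repos\MyProject") (a hypothetical path) returns the logs you would later find under Logs in the published artifact.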

Step 6: Install Older NuGet Version

YAML

- task: NuGetToolInstaller@0
  displayName: 'Use NuGet 3.3.0'
  inputs:
    versionSpec: 3.3.0

What this does:

Installs NuGet 3.3.0 specifically

Why? The XppCreatePackage task requires NuGet < 3.4.0 due to legacy packaging format

Step 7: Create Deployable Package

This is the critical step that creates your F&O deployable package.

YAML

- task: XppCreatePackage@2
  displayName: Create Deployable Package
  inputs:
    XppToolsPath: '$(NuGetsPath)\$(ToolsPackage)'
    XppBinariesPath: '$(Build.BinariesDirectory)'
    CreateCloudPackage: true
    CloudPackagePlatVersion: '7.0.7778.29'
    CloudPackageAppVersion: '10.0.2428.63'
    CloudPackageOutputLocation: '$(Build.ArtifactStagingDirectory)\CloudDeployablePackage_$(Build.BuildNumber)'
    CreateRegularPackage: true
    DeployablePackagePath: '$(Build.ArtifactStagingDirectory)\AXDeployableRuntime_$(Build.BuildNumber).zip'

What this does:

XppCreatePackage@2: Version 2 supports both legacy (LCS) and unified (PPAC) package formats

XppToolsPath: Points to the Compiler Tools package

XppBinariesPath: Where the compiled DLLs are located

CreateCloudPackage: true: Creates the Unified Package for PPAC deployment

CloudPackagePlatVersion: Platform version (must match, or be lower than, the platform version of the target environment)

CloudPackageAppVersion: Application version (keep it aligned with the target environment in the same way)

CloudPackageOutputLocation: Output folder for the unified package

CreateRegularPackage: true: Creates the legacy LCS package (.zip file)

DeployablePackagePath: Output path for the LCS package

💡 Pro tip: You can create both package formats simultaneously. This gives you flexibility to deploy via LCS (legacy) or PPAC (modern).
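Because the platform version must match or be lower than the target environment's version, it is worth comparing versions numerically rather than as text. A minimal Python sketch with hypothetical helper names, assuming plain dotted numeric versions like the ones above:

```python
def parse_version(text):
    """'7.0.7778.29' -> (7, 0, 7778, 29), so parts compare as numbers, not strings."""
    return tuple(int(part) for part in text.split("."))


def fits_target(package_version, environment_version):
    """True when the version baked into the package is equal to or lower than
    the version already running on the target environment."""
    return parse_version(package_version) <= parse_version(environment_version)


print(fits_target("7.0.7778.29", "7.0.7778.29"))     # True: exact match
print(fits_target("10.0.2428.63", "10.0.2345.100"))  # False: package is newer
# A plain string comparison would get this one wrong ("29" sorts after "100" as text).
print(fits_target("7.0.7778.29", "7.0.7778.100"))    # True
```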

Output artifacts:

Build.ArtifactStagingDirectory/
├── CloudDeployablePackage_26.03.06.1/
│   └── TemplatePackage.dll (Unified package for PPAC)
├── AXDeployableRuntime_26.03.06.1.zip (LCS package)
└── Logs/
    ├── Dynamics.AX.YourModel.xppc.log
    └── ...

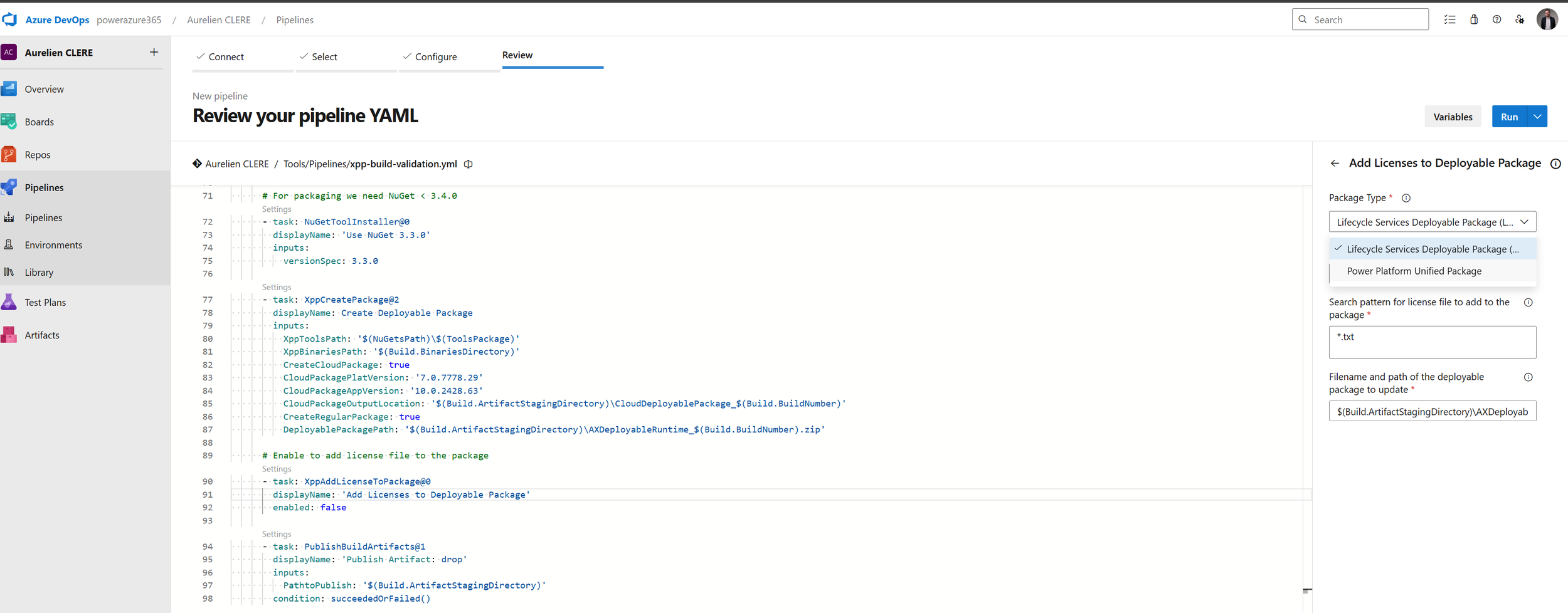

Step 8: Add License Files (Optional)

YAML

- task: XppAddLicenseToPackage@0
  displayName: 'Add Licenses to Deployable Package'
  enabled: false

What this does:

Adds ISV license files to the deployable package

enabled: false: Currently disabled. Set to true if you have ISV licenses to include

Tip: use the Edit button on the pipeline. Its task assistant helps you build or change the YAML if you are not sure of the syntax.

Pay attention to indentation and run the Validate option before saving, as proper formatting prevents errors.

For ISV licenses, if you have any, the setup depends on which package you want to update (DP or UDP):

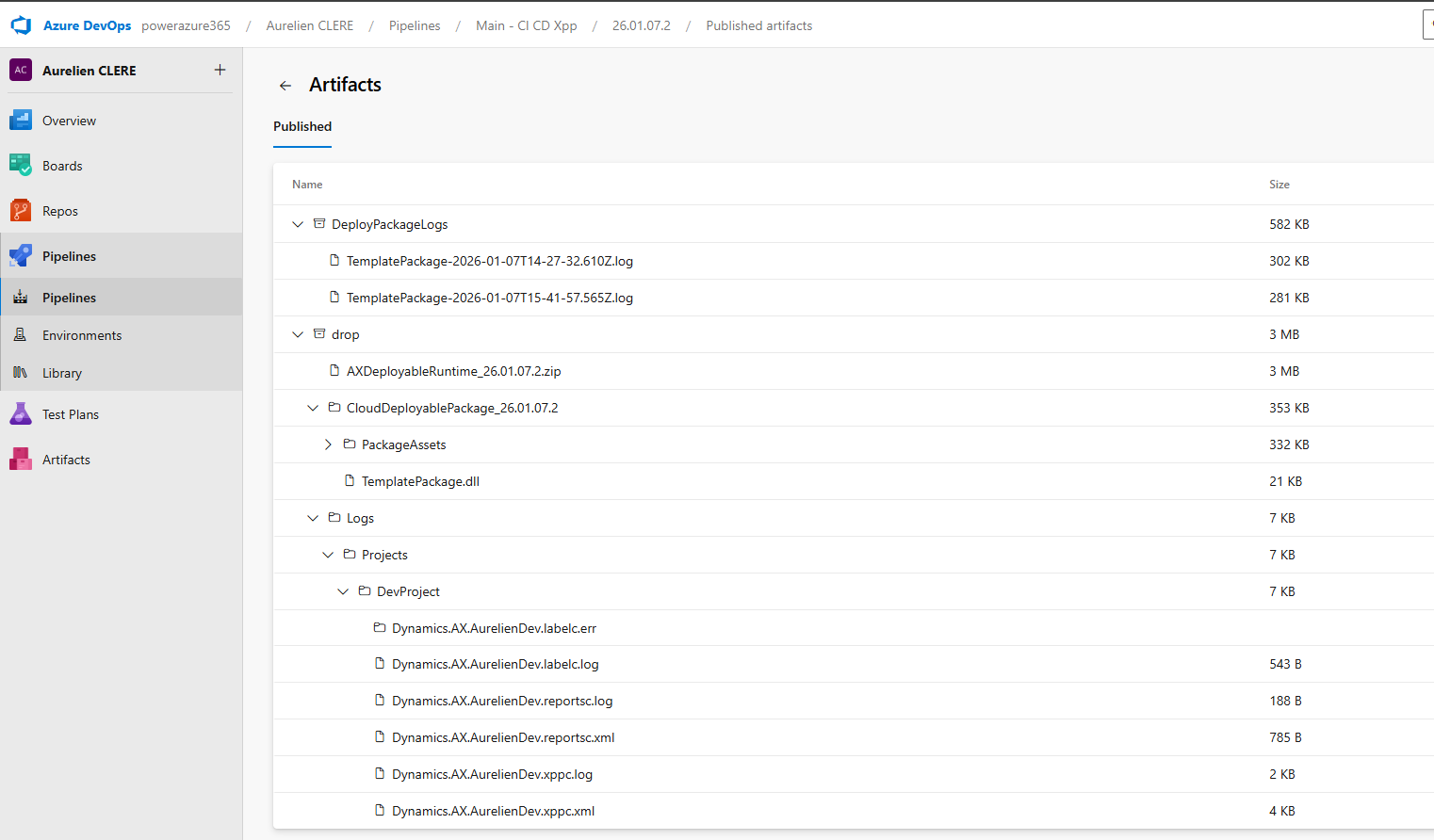

Step 9: Publish Artifacts

YAML

- task: PublishBuildArtifacts@1
  displayName: 'Publish Artifact: drop'
  inputs:
    PathtoPublish: '$(Build.ArtifactStagingDirectory)'
  condition: succeededOrFailed()

What this does:

Publishes everything in the staging directory as a pipeline artifact named drop

condition: succeededOrFailed(): Publishes even if the build failed (so you can see logs)

These artifacts become available to downstream deployment stages.

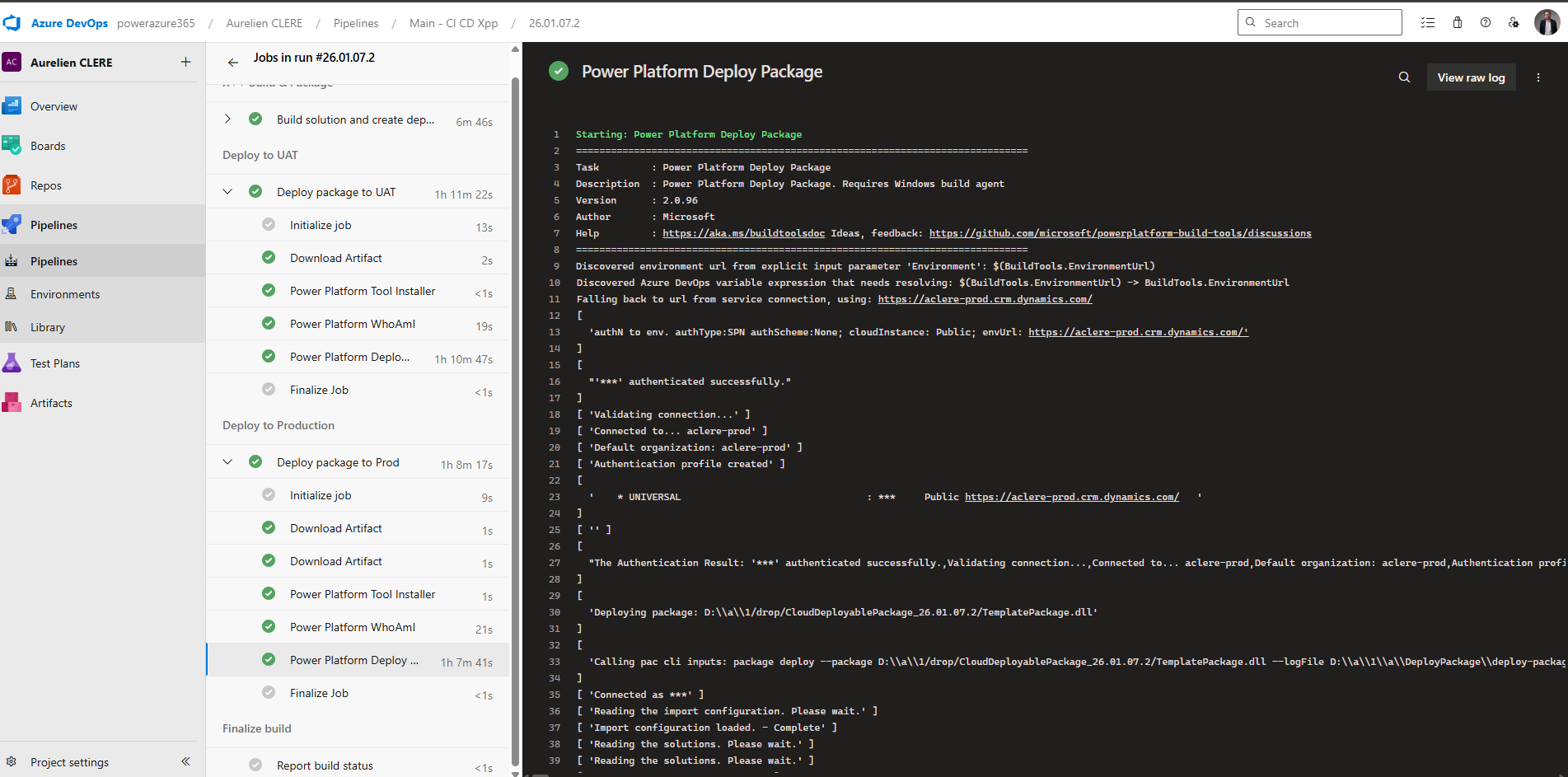

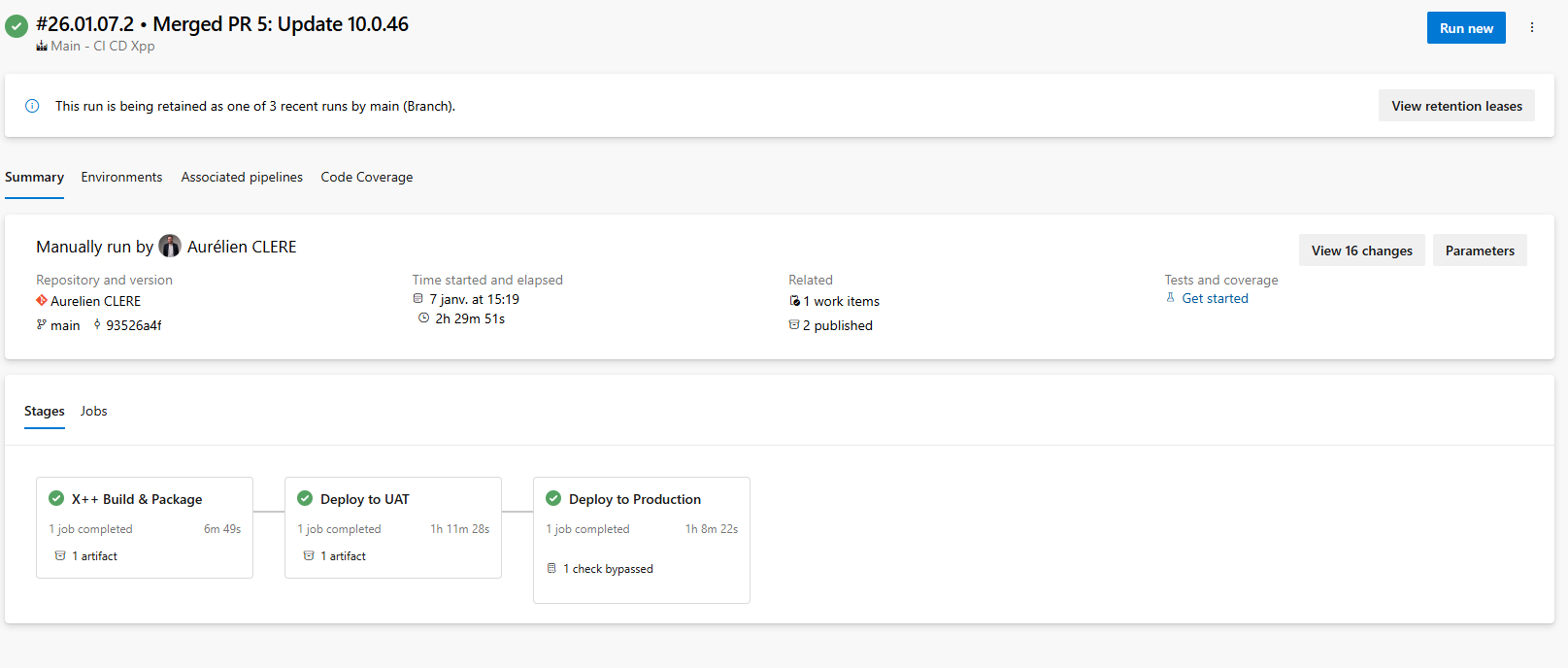

Stage 2: Deploy to UAT Environment

Now let's deploy our freshly built package to the UAT environment.

YAML

- stage: DeployUAT
  displayName: Deploy to UAT
  dependsOn: Build
  condition: succeeded()
  jobs:
  - deployment: DeployToUAT
    timeoutInMinutes: 360
    pool:
      vmImage: 'windows-latest'
    displayName: Deploy package to UAT
    environment: 'D365-UAT'
    strategy:
      runOnce:
        deploy:
          steps:

Key concepts:

stage: DeployUAT: A separate stage from Build

dependsOn: Build: This stage only runs after the Build stage completes

condition: succeeded(): Only runs if Build succeeded

deployment job: Special job type for deployments (different from regular jobs)

timeoutInMinutes: 360: 6-hour timeout (F&O deployments can take 1–3 hours). 360 minutes is also the maximum job duration on Microsoft-hosted agents with paid parallel jobs.

environment: 'D365-UAT': Links to an Azure DevOps Environment (more on this just below 😊)

Deployment Steps: Power Platform Tasks

YAML

steps:
- task: PowerPlatformToolInstaller@2
  displayName: 'Power Platform Tool Installer'
- task: PowerPlatformWhoAmI@2
  displayName: 'Power Platform WhoAmI'
  inputs:
    authenticationType: PowerPlatformSPN
    PowerPlatformSPN: 'USE'
- task: PowerPlatformDeployPackage@2
  displayName: 'Power Platform Deploy Package'
  inputs:
    authenticationType: PowerPlatformSPN
    PowerPlatformSPN: 'USE'
    PackageFile: '$(Pipeline.Workspace)/drop/CloudDeployablePackage_$(Build.BuildNumber)/TemplatePackage.dll'

Step-by-step:

PowerPlatformToolInstaller@2: Installs PAC CLI on the build agent

PowerPlatformWhoAmI@2: Authenticates and verifies the connection

PowerPlatformSPN: 'USE': References the service connection named USE (your UAT, a Unified Sandbox Environment) that you created at the very beginning of this article!

PowerPlatformDeployPackage@2: Deploys the unified package

PackageFile: Points to TemplatePackage.dll in the unified package folder

This is the new PPAC deployment method (no LCS involved!)

💡 Important: The service connection USE must be configured with the correct App Registration, Client Secret, and target environment URL.

Stage 3: Deploy to Production

The Production stage is nearly identical to UAT, but with a different environment and service connection.

YAML

- stage: DeployProd
  displayName: Deploy to Production
  dependsOn: DeployUAT
  condition: succeeded()
  jobs:
  - deployment: DeployToProd
    timeoutInMinutes: 360
    pool:
      vmImage: 'windows-latest'
    displayName: Deploy package to Prod
    environment: 'D365-Prod'
    strategy:
      runOnce:
        deploy:
          steps:
          - task: PowerPlatformToolInstaller@2
            displayName: 'Power Platform Tool Installer'
          - task: PowerPlatformWhoAmI@2
            displayName: 'Power Platform WhoAmI'
            inputs:
              authenticationType: 'PowerPlatformSPN'
              PowerPlatformSPN: 'UPE'
          - task: PowerPlatformDeployPackage@2
            displayName: 'Power Platform Deploy Package'
            inputs:
              authenticationType: 'PowerPlatformSPN'
              PowerPlatformSPN: 'UPE'
              PackageFile: '$(Pipeline.Workspace)/drop/CloudDeployablePackage_$(Build.BuildNumber)/TemplatePackage.dll'

Key differences:

dependsOn: DeployUAT: Runs only after UAT deployment succeeds

environment: 'D365-Prod': Different environment (with stricter approval policies)

PowerPlatformSPN: 'UPE': Different service connection for Production

In case you are still deploying through LCS, here is the LCS variant before we jump into Environments in Azure DevOps. Again, I'll publish the full files in my GitHub project.

Here's the cleaned-up deployment stage from an LCS-only YAML pipeline:

# ---------- Stage 2: Deploy UAT (LCS) ----------
- stage: UploadAndDeploy
  displayName: 'Upload to LCS and Deploy via LCS'
  dependsOn: Build
  condition: succeeded()
  jobs:
  - deployment: DeployToUAT
    displayName: 'Upload and Deploy to UAT'
    environment: 'UAT' # Replace with your Azure DevOps environment name
    strategy:
      runOnce:
        deploy:
          steps:
          - task: DownloadPipelineArtifact@2
            displayName: 'Download Build Artifacts'
            inputs:
              buildType: 'current'
              artifactName: 'drop'
              targetPath: '$(Pipeline.Workspace)'
          - task: InstallMSALModule@1
          - task: LCSAssetUpload@2
            name: UploadUAT
            displayName: 'Upload to LCS - UAT'
            inputs:
              serviceConnectionName: 'LCS' # Replace with your LCS service connection name
              projectId: 'YOUR_LCS_PROJECT_ID'
              assetType: '10' # 10 = Deployable Package
              assetPath: '$(Pipeline.Workspace)/AXDeployableRuntime_$(Build.BuildNumber).zip'
              assetName: 'UAT Branch $(Build.BuildNumber)'
              assetDescription: 'Release_$(Build.BuildNumber)'
          - task: LCSAssetDeploy@4
            displayName: 'Deploy via LCS UAT'
            inputs:
              serviceConnectionName: 'LCS'
              projectId: 'YOUR_LCS_PROJECT_ID'
              environmentId: 'YOUR_LCS_ENVIRONMENT_ID'
              fileAssetId: '$(UploadUAT.FileAssetId)'
              deploymentType: 'hq'
              releaseName: 'UAT Branch $(Build.BuildNumber)'
              waitForCompletion: false

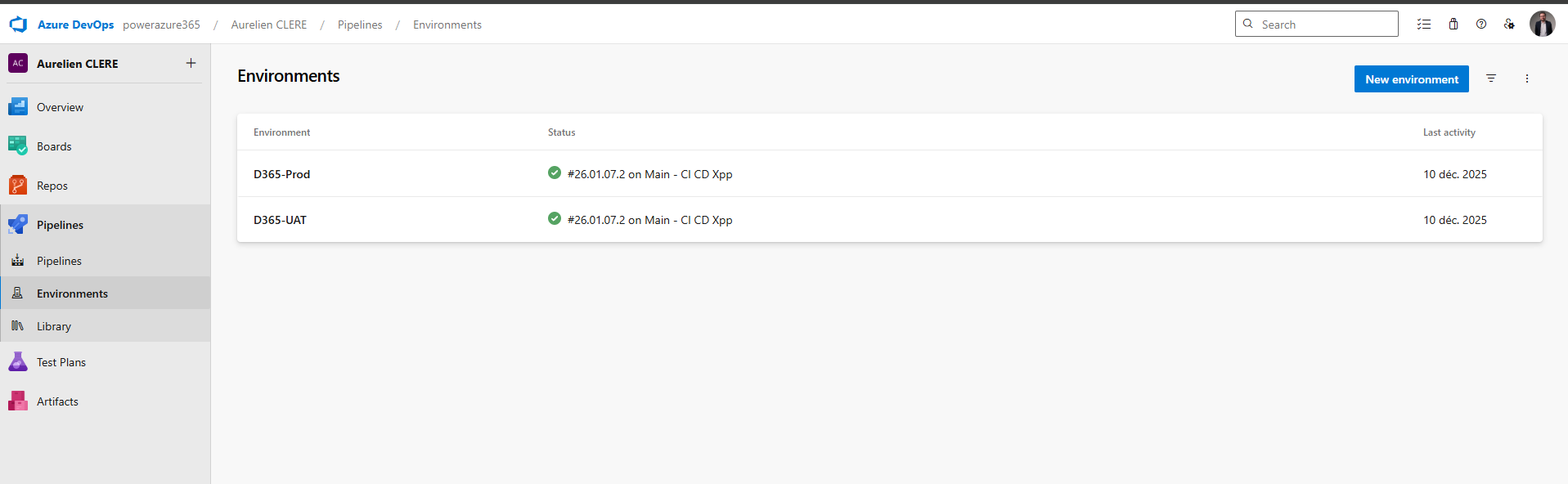

Environments, Approvals & Policies

Now let's talk about Environments—the secret sauce that gives you governance and control over deployments.

What Is an Environment in Azure DevOps?

An Environment is a logical target for deployment (e.g., UAT, Production). It's NOT the same as your Dynamics 365 environment—it's a DevOps construct that lets you:

Require manual approvals before deployment

Restrict which branches can deploy

Enforce business hours for deployments

Track deployment history

Define multiple approvers with voting rules

Creating an Environment

Go to Pipelines > Environments > New environment

Name it (e.g., D365-UAT, D365-Prod)

Description: "UAT Environment for Dynamics 365 F&O"

Click Create

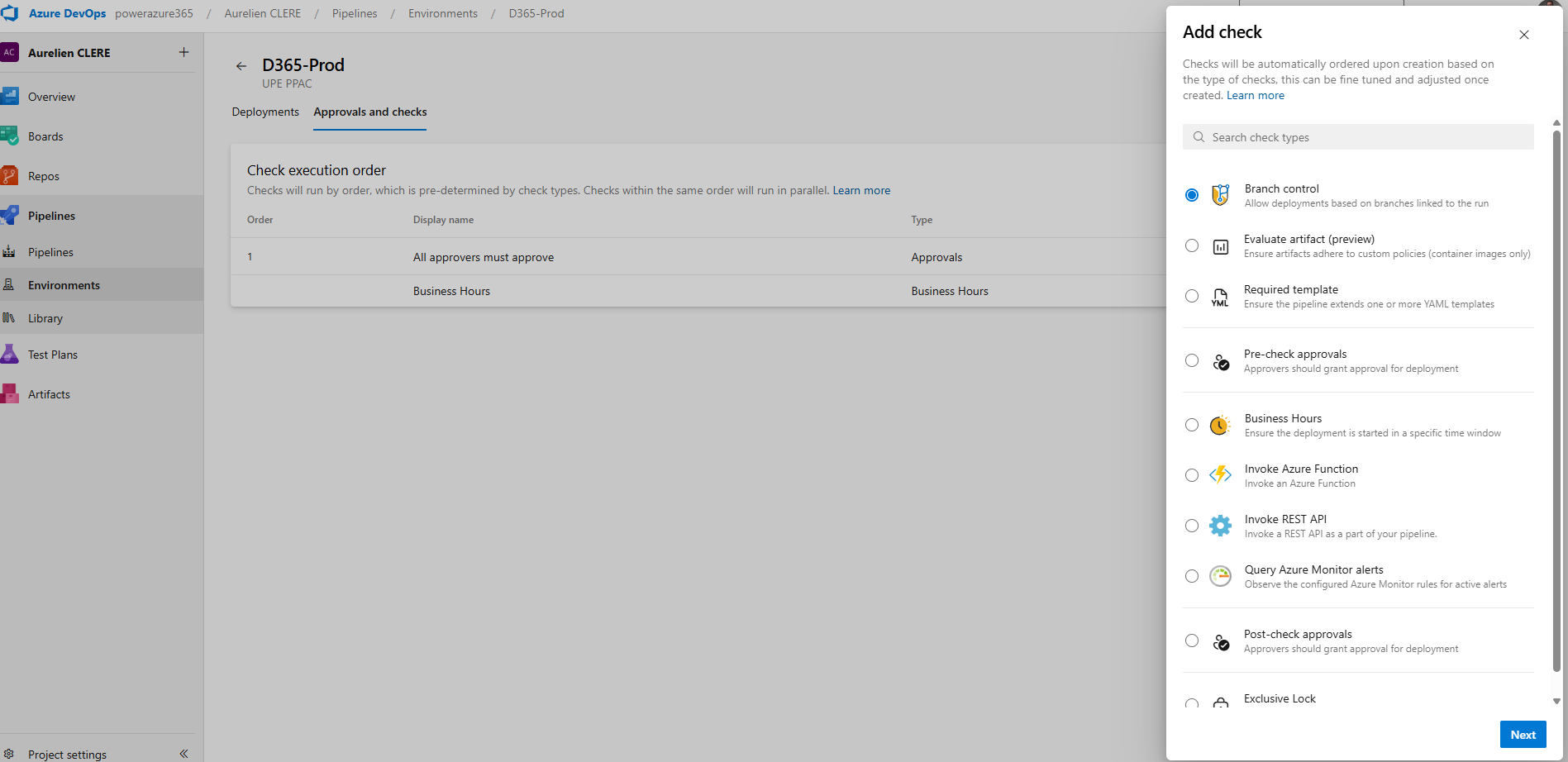

Configuring Approval Workflows

Once your environment is created, click on it and add Approvals and checks:

1. Approvals

Click Approvals and checks > Approvals

Add approvers (e.g., Tech Lead, Product Owner, Change Manager, Release Manager etc…)

Configure:

Minimum number of approvers: At least 1 (or 2 for Production)

Allow requestors to approve: Usually No for Production

Timeout: 30 days (how long approval waits before expiring)

Instructions: Add notes for approvers (e.g., "Review deployment checklist before approving")

What happens: When the pipeline reaches the deployment stage, it pauses and sends a notification to approvers. They review and either approve or reject.

2. Branch Control

Click Approvals and checks > Branch control

Specify allowed branches (e.g., only main or release/*)

What happens: Deployments are only allowed from specific branches. Prevents accidental Production deployments from feature branches.
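The idea behind branch control can be modelled in a few lines of Python. This is a rough sketch, not Azure DevOps' implementation: as far as I know the check compares the run's full ref name (such as refs/heads/main) against the allowed patterns, but its exact wildcard rules may differ from Python's fnmatch.

```python
import fnmatch

# Example allow-list, as you might type it in the Branch control check.
ALLOWED_BRANCHES = ("refs/heads/main", "refs/heads/release/*")


def branch_allowed(source_branch, allowed=ALLOWED_BRANCHES):
    """Rough model of the check: the run's full ref name (Build.SourceBranch)
    must match one of the allowed patterns. Note that fnmatch's '*' also
    matches '/', so release/* accepts nested names such as release/2026/r1."""
    return any(fnmatch.fnmatchcase(source_branch, pattern) for pattern in allowed)


print(branch_allowed("refs/heads/main"))                # True
print(branch_allowed("refs/heads/release/10.0.45"))     # True
print(branch_allowed("refs/heads/feature/new-report"))  # False
```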

3. Business Hours

Click Approvals and checks > Business hours

Define deployment windows (e.g., Monday–Friday, 9 AM–5 PM, timezone-aware)

What happens: Deployments are blocked outside of business hours. Great for avoiding Friday evening Production deployments!
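A business-hours window boils down to a weekday check plus a time-of-day range in the chosen time zone. The Python sketch below illustrates that logic with a hypothetical helper; it uses a fixed UTC+1 offset for simplicity, whereas the real check is configured with a named time zone.

```python
from datetime import datetime, time, timedelta, timezone


def in_business_hours(moment, tz, start=time(9, 0), end=time(17, 0), weekdays=range(5)):
    """True if `moment` falls Monday-Friday (weekday 0-4) with start <= local time < end."""
    local = moment.astimezone(tz)
    return local.weekday() in weekdays and start <= local.time() < end


utc_plus_1 = timezone(timedelta(hours=1))  # fixed offset, no DST handling
friday_morning = datetime(2026, 3, 6, 8, 30, tzinfo=timezone.utc)   # 09:30 local
friday_evening = datetime(2026, 3, 6, 16, 30, tzinfo=timezone.utc)  # 17:30 local
saturday = datetime(2026, 3, 7, 10, 0, tzinfo=timezone.utc)
print(in_business_hours(friday_morning, utc_plus_1))  # True
print(in_business_hours(friday_evening, utc_plus_1))  # False: after 17:00
print(in_business_hours(saturday, utc_plus_1))        # False: weekend
```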

4. Required Template

Click Approvals and checks > Required template

Specify a YAML template that must be used for deployments

What happens: Enforces consistency across all deployments (e.g., all Production deployments must include a specific notification task).

Example Environment Setup

Scheduling Deployments

An essential aspect of deployment management is the ability for a release manager to schedule when a deployment actually occurs. Instead of triggering the deployment immediately upon approval, the release manager can choose a specific future date and time for the deployment to be executed. This is especially useful when you want to avoid deploying during peak business hours or outside of approved windows, or if you need to coordinate deployments with other teams.

How to Schedule a Deployment

Step 1: After your deployment has passed all required approvals and checks, navigate to the deployment pipeline or release page in your deployment tool (such as Azure DevOps).

Step 2: Look for an option labeled Schedule, Schedule Deployment, or a calendar/clock icon (the exact label may vary depending on the tool).

Step 3: Click the Schedule option. A scheduling dialog will appear, allowing you to select the desired date and time for the deployment to start.

Step 4: Select the preferred deployment date and time (e.g., 03/06/2026, 7:00 PM). Make sure to confirm the time zone setting to avoid confusion.

Step 5: Save or confirm your schedule. The deployment will now be queued and automatically executed at the specified time.

Step 6 (Optional): Notify relevant stakeholders about the scheduled deployment time, especially if coordination with other teams is required.

For example, after the deployment is approved, the release manager can set the deployment to begin at 3:00 AM on a Sunday, ensuring that the process starts at a low-traffic time. This scheduling capability provides flexibility, helps prevent disruptions, and supports better planning for both IT and business stakeholders.

In LCS you could schedule a deployment manually from the portal; now the whole flow, scheduling included, runs from Azure DevOps.
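When picking the date and time to enter, it helps to compute the next slot and its UTC equivalent up front. Here is a Python sketch of that calculation, for example the next Sunday at 3:00 AM after an approval given on a Friday evening. The helper name is hypothetical and the time zone is a fixed UTC+1 offset.

```python
from datetime import datetime, time, timedelta, timezone


def next_slot(after, weekday, at, tz):
    """Next datetime strictly after `after` that falls on `weekday`
    (Monday=0 ... Sunday=6) at local time `at` in timezone `tz`."""
    local = after.astimezone(tz)
    days_ahead = (weekday - local.weekday()) % 7
    candidate = datetime.combine(local.date() + timedelta(days=days_ahead), at, tzinfo=tz)
    if candidate <= local:
        candidate += timedelta(weeks=1)  # that slot already passed this week
    return candidate


utc_plus_1 = timezone(timedelta(hours=1))  # fixed offset, no DST handling
approved_at = datetime(2026, 3, 6, 18, 0, tzinfo=utc_plus_1)  # Friday evening
slot = next_slot(approved_at, weekday=6, at=time(3, 0), tz=utc_plus_1)
print(slot.isoformat())                           # 2026-03-08T03:00:00+01:00
print(slot.astimezone(timezone.utc).isoformat())  # 2026-03-08T02:00:00+00:00
```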

Best Practices for Pipeline Organization

Based on years of F&O implementations, here's what works best:

| Pipeline | Purpose | Trigger | Deploys to |
|---|---|---|---|
| xpp-build-validation.yml | PR validation | Pull Request | None |
| xpp-ci-uatonly.yml | Daily integration | Schedule | UAT only |
| xpp-ci.yml | Full release | Manual + Schedule | UAT => PrePROD => Production |

Use Build Number as Version

Always use the build number format YY.MM.DD.Rev as your model version. This creates a clear audit trail.

Create Both Package Formats

Enable both CreateCloudPackage and CreateRegularPackage. This gives you flexibility during the LCS-to-PPAC transition period.

Set Realistic Timeouts

F&O deployments can take 1–3 hours. Set timeoutInMinutes: 360 (6 hours) to avoid premature failures.

Publish Logs on Failure

Use condition: succeededOrFailed() on log publishing tasks. You'll need those logs to troubleshoot build failures.

Use Service Connections per Environment

Create separate service connections for each environment (e.g., UAT-SPN, PROD-SPN). This enforces least-privilege access.

How It All Works Together: End-to-End Flow

Let me walk you through what happens when you trigger the xpp-ci.yml pipeline:

Build Stage Starts

Agent provisions (30 seconds)

NuGet packages restore from Azure Artifacts (2 minutes)

Model version updates to build number (5 seconds)

X++ compilation via MSBuild (10–15 minutes)

Deployable packages created (2 minutes)

Artifacts published (1 minute)

UAT Deployment Stage Starts

Waits for approvals (if configured)

PAC CLI installs (30 seconds)

Authenticates to UAT environment (10 seconds)

Package deployment begins (60–120 minutes)

Deployment completes, services restart

Production Deployment Stage Waits

Pipeline pauses for manual approval

Approvers receive notification

Approvers review deployment logs, UAT testing results

Approvers approve (or reject)

Production Deployment Starts

Same process as UAT

Deploys to Production environment (60–120 minutes)

Pipeline completes

Total time: Build (20 min) + UAT (90 min) + Prod (90 min) = 200 minutes, roughly 3 hours 20 minutes, plus however long the manual approval takes.

In the past, creating a release candidate was mandatory in Lifecycle Services (LCS), which enforced certain deployment steps and checkpoints. As demonstrated here, we can now replicate that workflow directly in Azure DevOps pipelines, which gives teams more flexibility and control. Note, however, that Azure DevOps also lets you bypass traditional stages such as approvals or UAT testing and push builds straight to Production, as long as you have a valid YAML pipeline configured. In other words, you could deploy to Production without any manual intervention or testing phase. This is not recommended: it carries significant risk and should be reserved for exceptional cases, a bit like the quick hotfix XPO we used to import straight into Prod in the USR layer back in AX 2012, right? 😊

Let's keep building!

Automating Environment Management with YAML, PAC CLI & PowerShell

One of the most powerful capabilities of the Unified ALM approach is the ability to automate environment lifecycle operations directly from your Azure DevOps pipelines. No more manual clicks in PPAC—you can create, copy, and configure F&O environments entirely through code.

In this section, we'll cover:

Creating brand new Unified Experience environments (UDE, USE) via YAML

Copying existing environments with full or minimal transaction data

Post-copy automation scripts

Leveraging Power Platform Admin Connector V2 for advanced scenarios

Creating a New Unified Experience Environment

With PPAC, you can provision F&O environments directly from pipelines using PowerShell.

Option: PowerShell Admin Module

For more control, use the Microsoft.PowerApps.Administration.PowerShell module.

My colleague Youssra at Dynagile wrote a good article on this topic: Automating the Creation of Dynamics 365 F&O Unified Developer Experience (UDE) Environments - Dynagile Consulting. It includes the YAML pipelines we built internally to create UDEs and to manage them after creation (adding them to an environment group, making them managed Dataverse environments, granting access to the end users who requested the environment). You can adapt it to your context and even use the same approach to create USE and UPE environments. As covered in my extensive article about Unified Experiences, the PowerShell commands are also available to run yourself. I like the PowerShell way of creating F&O environments in PPAC because it exposes more arguments and options than the PPAC UI (for example, creating a UDE was not possible from the UI at first).

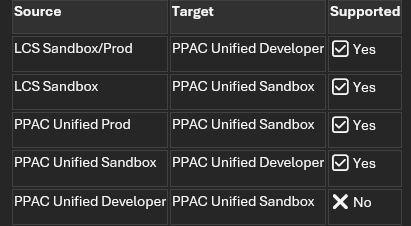

Copying Environments: Full Copy vs Less Transaction Copy for F&O

Microsoft Learn has a tutorial with the PowerShell for this; the good part is that it uses a Service Principal to connect to Power Apps and start the copy. As mentioned in the previous part, I don't think you can (yet) create a UDE or USE from YAML pipelines with the same kind of authentication, because that requires a real human user as System Administrator or Environment Admin to connect to F&O, not an SPN. For simply launching a copy in PPAC, however, an SPN works as soon as it has enough rights; after all, once the copy completes only the environment admin has access to F&O, which is the same behavior as in LCS.

Tutorial: Perform a transaction-less copy between environments - Power Platform | Microsoft Learn

You can do the same for backup/restore using PowerShell in YAML: Tutorial: Backup and restore unified environments - Power Platform | Microsoft Learn

Supported Copy Scenarios:

💡 Important: Always copy from higher environments (Prod/Sandbox) to lower environments (Dev). Copying from Dev to Sandbox/Prod is not supported. This is a change compared to LCS, where you could export a .bacpac from a Tier-1 environment and import it into a Tier-2.

Power Platform Admin Connector V2 (Advanced)

For more complex scenarios, use the Power Platform for Admins V2 connector, available in:

Power Automate (no-code/low-code)

.NET SDK (for custom C# applications), and of course YAML pipelines in Azure DevOps!

Azure Logic Apps (for enterprise integrations)

Use Cases for Admin Connector V2

Approval workflows: Trigger environment copy only after Change Advisory Board (CAB) approval

Scheduled refreshes: Daily/weekly UAT refresh from Production

Monitoring: Track environment health and send alerts

Governance: Enforce naming conventions and tagging

Example: Power Automate Flow for Copy Approval

Trigger: Manual trigger or scheduled recurrence

Action: Send approval request to CAB members

Condition: If approved

Action: Use "Copy Environment" action from Power Platform for Admins V2 connector

Action: Send notification when copy completes

You can find samples in my GitHub project, where I have again put all my YAML files (including the copy part).

You can also build a complete end-to-end flow in a single YAML pipeline: create the environment, deploy the latest code, and then copy the data.

Best Practices for Environment Automation:

Use LTC for frequent refreshes

Saves time and storage. Only use FullCopy when you need complete transaction history.

Set realistic timeouts

Environment copies can take 2–6 hours depending on database size. Set timeoutInMinutes: 480 (8 hours). Note that Microsoft-hosted agents cap a job at 360 minutes, so waits that long need a self-hosted agent.

Implement approval gates

Use Azure DevOps Environments with approvals for production copies.

Store scripts in Git

Keep all PowerShell scripts (copy, post-copy) in your /Scripts folder and version them.

Notification on completion

Use Power Automate, Teams webhooks, or email notifications to alert when long-running operations complete.

Monitor environment health

Use the Power Platform for Admins V2 connector to track environment status and capacity.

Make sure the service principal (SPN) used in your YAML pipelines is registered ahead of time. Run pac admin application register --application-id with your application (client) ID to register it with the Power Platform admin tools; detailed instructions are in the Microsoft Learn documentation. This step ensures your SPN is recognized and has the permissions needed for automation tasks. Don't forget to assign the SPN the Power Platform Administrator role in Entra ID, and to add it as System Administrator and as an S2S application in every related Dataverse environment.

You have now seen that Azure DevOps, through YAML pipelines, can be the control tower for the entire lifecycle of your environments. That means code, of course, but also Data ALM, copies, environment creation and more. All of it runs without manual actions, and all of it is API-first!

Let's keep building!

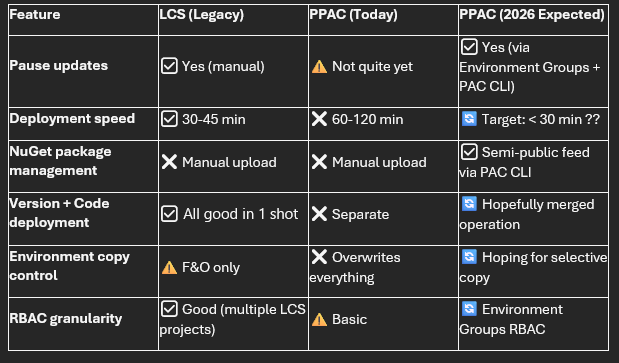

⚠️ Current Limitations & Expected Microsoft Enhancements (2026)

The Unified ALM experience brings F&O closer to modern DevOps practices, but gaps remain. Some features we had in LCS are still missing, as are some that Power Platform developers enjoy today. Let me be transparent about what's missing and what Microsoft is working on. I hope I can delete this sub-chapter at some point!

1. Pause Strategy for Updates (expected in March 2026)

Current Problem: In LCS, we could pause updates. In the PPAC era, we cannot yet.

What's Coming: Microsoft is working on Environment Groups in PPAC with F&O-specific rules, expected to roll out in March 2026. This will allow you to:

Group multiple environments logically (e.g., "Production Environments", "Dev Sandbox Environments")

Apply pause strategies at the group level rather than per environment

Manage pauses via PAC CLI and Power Platform Admin APIs

Automate pause management directly from Azure DevOps YAML pipelines

Update: at least we now have the API to handle it!

Expected YAML example (future, and purely my own concept). It would be great to manage the pause from DevOps too. As soon as your production is on the latest GA version, you could already pause the next one. This follows the pattern of taking only 2 versions per year instead of 4:

```yaml
- task: PowerShell@2
  displayName: 'Pause Updates for Environment Group'
  inputs:
    targetType: 'inline'
    script: |
      # Conceptual command (my proposal): not available in PAC CLI at the time of writing
      pac admin environment-group pause `
        --group-id "production-group" `
        --pause-duration 3 `
        --reason "Fiscal year-end freeze"
```

Additional benefits:

Environment Group RBAC: more granular security roles and permissions within PPAC. In PPAC you could have multiple UPEs for different geographies and grant access to one group only to certain people, without giving them access to the others.

Centralized governance: Apply DLP policies, compliance tags, and update windows consistently across groups

💡 My take: This is one of the most requested features from the community. API-first approach means we'll be able to script it from day one.

2. Slow Deployment Times in PPAC

Current Problem: Deployments to Unified Production Environments (UPE) via PPAC are significantly slower than they were in LCS:

PPAC today: 1–2 hours for a typical code deployment

LCS average: 30–45 minutes for the same package (for production, I would say; Tier-2 sandbox deployments mostly took about 1 hour).

This is a pain point for teams trying to implement rapid CI/CD cycles or needing to deploy urgent hotfixes.

Why is it slower? The unified platform architecture means deployments now touch both F&O and Dataverse layers, and Microsoft is still optimizing the deployment orchestration.

What Microsoft is working on:

Performance improvements to the deployment engine

Better parallel processing of deployment steps

Optimized database synchronization

Target: match or beat LCS deployment times (hopefully beat 😊)

Workaround for now:

Schedule deployments during off-peak hours (e.g., overnight)

Use timeoutInMinutes: 360 (6 hours) in your YAML to avoid premature timeout failures

Plan for longer deployment windows in your release schedules
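The following sketch shows both workarounds. It assumes a Saturday 01:00 UTC maintenance window and a hypothetical deployment script. Azure Pipelines evaluates cron schedules in UTC, so shift the expression to match your region's off-peak hours.

```yaml
# Off-peak scheduled deployment with a 6-hour job timeout.
# Cron times are UTC in Azure Pipelines; adjust to your own maintenance window.
trigger: none

schedules:
- cron: '0 1 * * 6'          # 01:00 UTC every Saturday
  displayName: 'Weekly off-peak deployment'
  branches:
    include:
    - main
  always: false              # run only if main changed since the last successful scheduled run

jobs:
- job: DeployToUPE
  timeoutInMinutes: 360      # 6 hours, also the cap for Microsoft-hosted agents on paid parallel jobs
  pool:
    vmImage: 'windows-latest'
  steps:
  - task: PowerShell@2
    displayName: 'Deploy package'
    inputs:
      filePath: 'Scripts/Deploy-Package.ps1'   # hypothetical script
```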

💡 My hope: Once Microsoft optimizes this, we'll see sub-30-minute deployments consistently. That would make PPAC truly superior to LCS.

3. Semi-Public NuGet Feed for X++ Packages (Future)

Current Problem: As we covered earlier in the NuGet section, today you must:

Download X++ compiler tools and reference packages from LCS Shared Asset Library

Manually upload them to your Azure Artifacts feed

Update packages.config with exact version numbers

Manage storage limits (2 GB free tier)

This is manual, error-prone, and time-consuming.

What Microsoft is working on: A semi-public NuGet feed hosted by Microsoft that contains all F&O compiler and reference packages. This means:

No manual uploads: Just reference the Microsoft feed in your nuget.config

Version-based retrieval: Specify the F&O version (e.g., 10.0.47) and PAC CLI pulls the correct packages automatically

Multi-version builds: Easily compile the same codebase against multiple F&O versions in a single pipeline

Expected future YAML example:

```yaml
variables:
  FOVersion: '10.0.47' # Change this variable to target different versions

steps:
- task: PowerShell@2
  displayName: 'Restore X++ Packages from Microsoft Feed'
  inputs:
    targetType: 'inline'
    script: |
      # Conceptual command: not available in PAC CLI at the time of writing.
      # NugetsPath is assumed to be defined elsewhere in the pipeline.
      pac nuget restore `
        --package-type FinanceOperations `
        --version $(FOVersion) `
        --output $(NugetsPath)
```

This would make multi-version testing and compatibility validation trivial—something that's nearly impossible today without maintaining multiple artifact feeds.
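The multi-version pattern itself can already be expressed today with a matrix strategy, which runs the same job once per variable set. This sketch assumes a hypothetical steps template, build-xpp.yml, that holds your existing restore and compile steps and accepts a foVersion parameter. Until a Microsoft feed exists, each version would still need its own packages in your artifact feed.

```yaml
# Compile the same codebase against several F&O versions in parallel.
# build-xpp.yml and its foVersion parameter are hypothetical.
jobs:
- job: BuildXpp
  strategy:
    matrix:
      FO_10_0_46:
        FOVersion: '10.0.46'
      FO_10_0_47:
        FOVersion: '10.0.47'
    maxParallel: 2
  pool:
    vmImage: 'windows-latest'
  steps:
  - template: build-xpp.yml
    parameters:
      foVersion: $(FOVersion)   # expanded at runtime for each matrix leg
```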

💡 My take: This is a game-changer for ISVs and partners who need to test against multiple F&O versions. It also aligns with the "API-first" and "automation-first" philosophy of Unified ALM.

4. No Merge Package + Version Update in One Operation

Current Major Limitation: Today in PPAC, you cannot:

Schedule an F&O platform/application version update via DevOps

Combine a version update with a code deployment in a single operation

Real-world scenario: Let's say I want to:

Update my UPE from 10.0.45 to 10.0.47

Deploy a hotfix package immediately afterward, or even at the same time. LCS allowed this with the release candidate option, which deployed the custom code and the version together as a snapshot.

What I have to do today:

Wake up at 3:00 AM (or whenever the maintenance window starts)

Manually trigger the version update via PPAC UI

Wait 1–2 hours for the update to complete

Then manually trigger my Azure DevOps pipeline to deploy the code package

Wait another 1–2 hours for deployment

This is not scalable, not automatable, and frankly, not acceptable for modern DevOps.

What's missing:

PAC CLI command like pac application update --version 10.0.47 --package mycode.zip --environment UAT

YAML task like PowerPlatformMergeAndDeploy@1 that handles both operations

Comparison to Dataverse: As you'll see in the next section, we can now update Dynamics 365 apps (Dual Write, Customer Service, etc.) via PAC CLI without manual intervention.

💡 My hope: Microsoft will introduce a unified deployment task that merges platform updates and code packages into a single, orchestrated operation, ideally by mid-2026 or even sooner 😊

Workaround for now:

Decouple version updates from code deployments

Maintain separate maintenance windows

Use Power Automate to trigger the pipeline automatically once it detects that the version update has completed. One option is polling, which is not ideal. Another is monitoring the msprov operation history table, as explained earlier for notifications.
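Until a combined operation exists, you can queue the pipeline before the window and let it wait, instead of triggering the deployment by hand in the middle of the night. This sketch uses the ManualValidation@0 task in an agentless job. Whoever confirms that the PPAC version update has finished resumes the run from the Azure DevOps UI, and the deployment stage starts. The notification address and script path are hypothetical.

```yaml
# Decoupled update + deploy: wait for confirmation that the PPAC version update
# has completed, then deploy the code package. Names and paths are hypothetical.
stages:
- stage: WaitForVersionUpdate
  jobs:
  - job: ConfirmUpdate
    pool: server                 # agentless job, required by ManualValidation@0
    timeoutInMinutes: 1440       # wait up to 24 hours for confirmation
    steps:
    - task: ManualValidation@0
      inputs:
        notifyUsers: 'release-team@contoso.com'
        instructions: 'Resume once the F&O version update in PPAC has completed.'
        onTimeout: 'reject'      # fail the run if nobody confirms in time

- stage: DeployCode
  dependsOn: WaitForVersionUpdate
  jobs:
  - job: Deploy
    pool:
      vmImage: 'windows-latest'
    steps:
    - task: PowerShell@2
      displayName: 'Deploy code package'
      inputs:
        filePath: 'Scripts/Deploy-Package.ps1'
```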

5. Warning: Environment Copy Overwrites Everything

This is something that caught many teams off guard when they first started using Unified Experiences.

What happens when you copy a UPE or USE to a UDE:

Real-world disaster scenario:

Developer has been working on custom Power Apps and Flows in their UDE (unmanaged solutions)

Admin triggers a copy from Production (UPE) to refresh the dev environment

Copy completes successfully

Developer's unmanaged solutions are gone—only the managed solutions from Production remain

If the developer didn't export their solution to Git before the copy, their work is lost, or at the very least very hard to recover or redo.

Why does this happen? Unlike LCS (which only touched F&O), PPAC environment copies are Dataverse-native operations. The entire Dataverse database is restored from the source, including solution layers.

Best Practices to Avoid Data Loss:

Always export unmanaged solutions before a copy operation.

Store all F&O code in Git, including all the custom Power Platform components from your solutions (never rely on code that exists only in the environment).

Automate post-copy redeployment.

Communicate clearly with the team before triggering copy operations.

Use naming conventions to distinguish dev work from production code.
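For post-copy redeployment, this minimal sketch rebuilds the unmanaged solution from its unpacked source in Git and imports it back into the refreshed environment. It assumes several things: the solution is unpacked under Solutions/<name> in the repository, pac is on the agent PATH, and DevEnvUrl, SolutionName, ClientId, ClientSecret and TenantId are pipeline variables. All of these names are hypothetical.

```yaml
# Post-copy: rebuild the unmanaged solution from Git and re-import it.
# Folder layout and variable names are hypothetical.
steps:
- checkout: self
- task: PowerShell@2
  displayName: 'Re-import unmanaged solution after copy'
  env:
    PP_CLIENT_SECRET: $(ClientSecret)
  inputs:
    targetType: 'inline'
    pwsh: true
    script: |
      $zip = "$(Build.ArtifactStagingDirectory)/$(SolutionName).zip"

      pac auth create --applicationId "$(ClientId)" --clientSecret "$env:PP_CLIENT_SECRET" `
        --tenant "$(TenantId)" --environment "$(DevEnvUrl)"
      if ($LASTEXITCODE -ne 0) { throw 'pac auth create failed' }

      # Pack the source folder committed in Git into an unmanaged solution zip
      pac solution pack --zipfile $zip --folder "$(Build.SourcesDirectory)/Solutions/$(SolutionName)" --packagetype Unmanaged
      if ($LASTEXITCODE -ne 0) { throw 'pac solution pack failed' }

      # Import it into the freshly copied environment
      pac solution import --path $zip
      if ($LASTEXITCODE -ne 0) { throw 'pac solution import failed' }
```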

This affects CRM projects too: This is the same challenge faced by pure Dynamics 365 CE/CRM projects. The Power Platform community has been dealing with this for years. Some strategies they use:

Solution segmentation: Keep development work in separate solutions that aren't part of production

Git-first development: Export to Git after every significant change

Scheduled backups: Automated nightly exports of unmanaged solutions

Communication protocols: Slack/Teams notifications before environment operations
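Scheduled backups and Git-first development work well together in a nightly pipeline. The pipeline exports the unmanaged solution, unpacks it, and commits the result. This sketch makes a few assumptions:

- DevEnvUrl, SolutionName, ClientId, ClientSecret and TenantId are hypothetical pipeline variables.
- The target branch is main.
- The build service identity has Contribute permission on the repository. Otherwise the push is rejected.

```yaml
# Nightly backup: export the unmanaged solution from the dev environment and commit it to Git.
# Variable names are hypothetical; the build service needs Contribute permission on the repo.
trigger: none

schedules:
- cron: '0 2 * * *'            # every night at 02:00 UTC
  displayName: 'Nightly solution backup'
  branches:
    include:
    - main
  always: true                 # run even when the repository has not changed

pool:
  vmImage: 'windows-latest'

steps:
- checkout: self
  persistCredentials: true     # lets the script push with the job's access token
- task: PowerShell@2
  displayName: 'Export, unpack and commit solution'
  env:
    PP_CLIENT_SECRET: $(ClientSecret)
  inputs:
    targetType: 'inline'
    pwsh: true
    workingDirectory: '$(Build.SourcesDirectory)'
    script: |
      $zip = "$(Build.ArtifactStagingDirectory)/$(SolutionName).zip"
      $folder = "Solutions/$(SolutionName)"

      pac auth create --applicationId "$(ClientId)" --clientSecret "$env:PP_CLIENT_SECRET" `
        --tenant "$(TenantId)" --environment "$(DevEnvUrl)"
      if ($LASTEXITCODE -ne 0) { throw 'pac auth create failed' }

      # Exported as unmanaged, since --managed is not specified
      pac solution export --name "$(SolutionName)" --path $zip
      if ($LASTEXITCODE -ne 0) { throw 'pac solution export failed' }

      pac solution unpack --zipfile $zip --folder $folder --packagetype Unmanaged
      if ($LASTEXITCODE -ne 0) { throw 'pac solution unpack failed' }

      git config user.email "pipeline@contoso.com"
      git config user.name "Nightly backup pipeline"
      git add $folder
      # Exit quietly when nothing changed since the last backup
      git diff --cached --quiet
      if ($LASTEXITCODE -eq 0) { Write-Host 'No changes to commit'; exit 0 }

      git commit -m "Nightly backup of $(SolutionName) [skip ci]"
      git push origin HEAD:main
```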

💡 My call to Microsoft: We need a "selective copy" option—e.g., "Copy F&O data but preserve target Dataverse solutions" or "Copy database but not solution layers". This would solve a huge pain point.

Summary: What's Working vs. What's Missing

My Recommendations

For teams migrating to PPAC today:

✅ Accept that deployments will be slower—plan your release windows accordingly

✅ Over-communicate environment copy operations to your team

✅ Implement automated solution backups before any environment operation

✅ Keep everything in Git—never trust the environment as the source of truth

✅ Monitor Microsoft's roadmap closely and test preview features early

For Microsoft Product Group (if you're reading this 😊):

🙏 Prioritize deployment performance

🙏 Give us a PAC CLI command to update F&O versions programmatically

🙏 Consider "selective copy" options to protect development work

🙏 Deliver the semi-public NuGet feed ASAP—this will eliminate so much friction

🙏 Keep the "API-first" philosophy—everything in the UI should be scriptable via PAC CLI

Despite these limitations, the trajectory is clear: Microsoft is moving toward a fully unified, API-first, automation-ready ALM platform. The PPAC experience will eventually surpass what LCS offered—we're just in the awkward transition phase right now.

In the next section, we'll look at the bright side: how to automate Dynamics 365 app deployments (Dual Write, Customer Service, Field Service) via PAC CLI—something that was impossible in the LCS world.

Let's keep pushing forward! 💪

Automating Dynamics 365 App Updates with PAC CLI

The Journey from "Not Possible" to "Fully Automated"

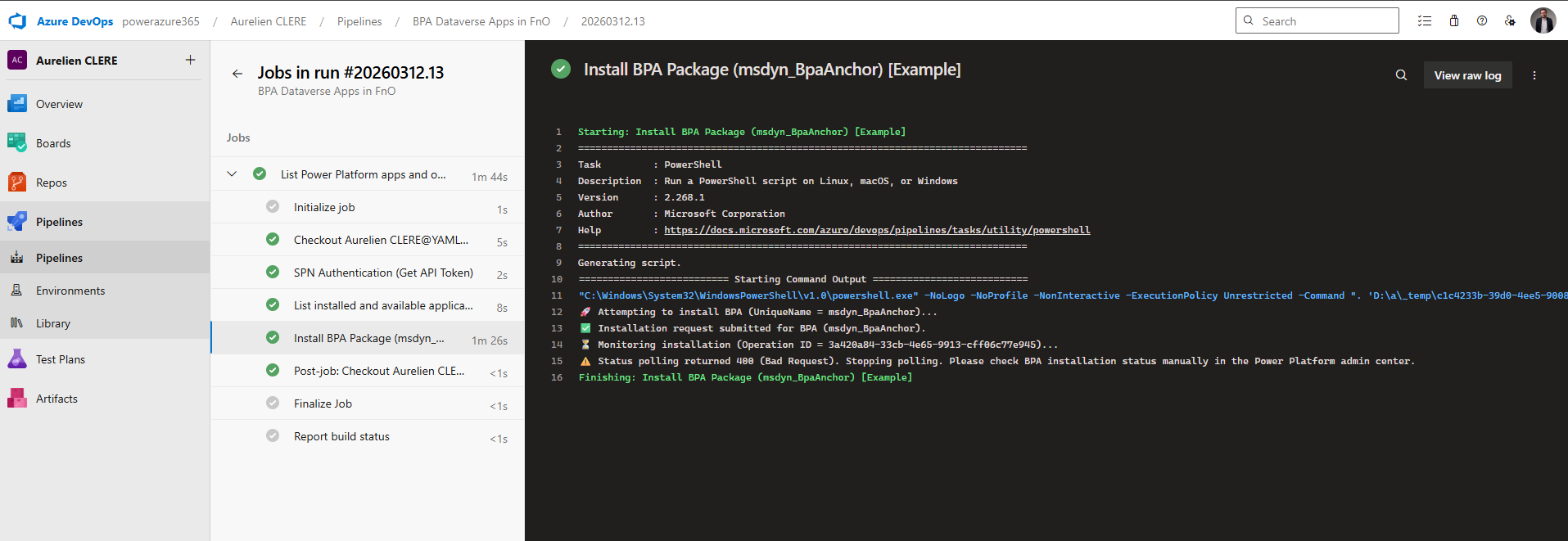

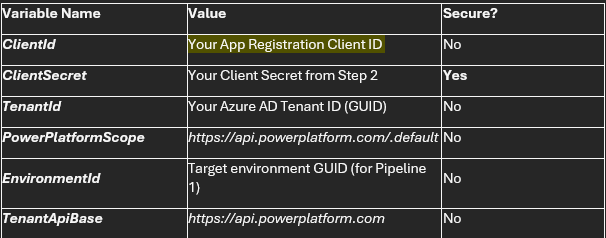

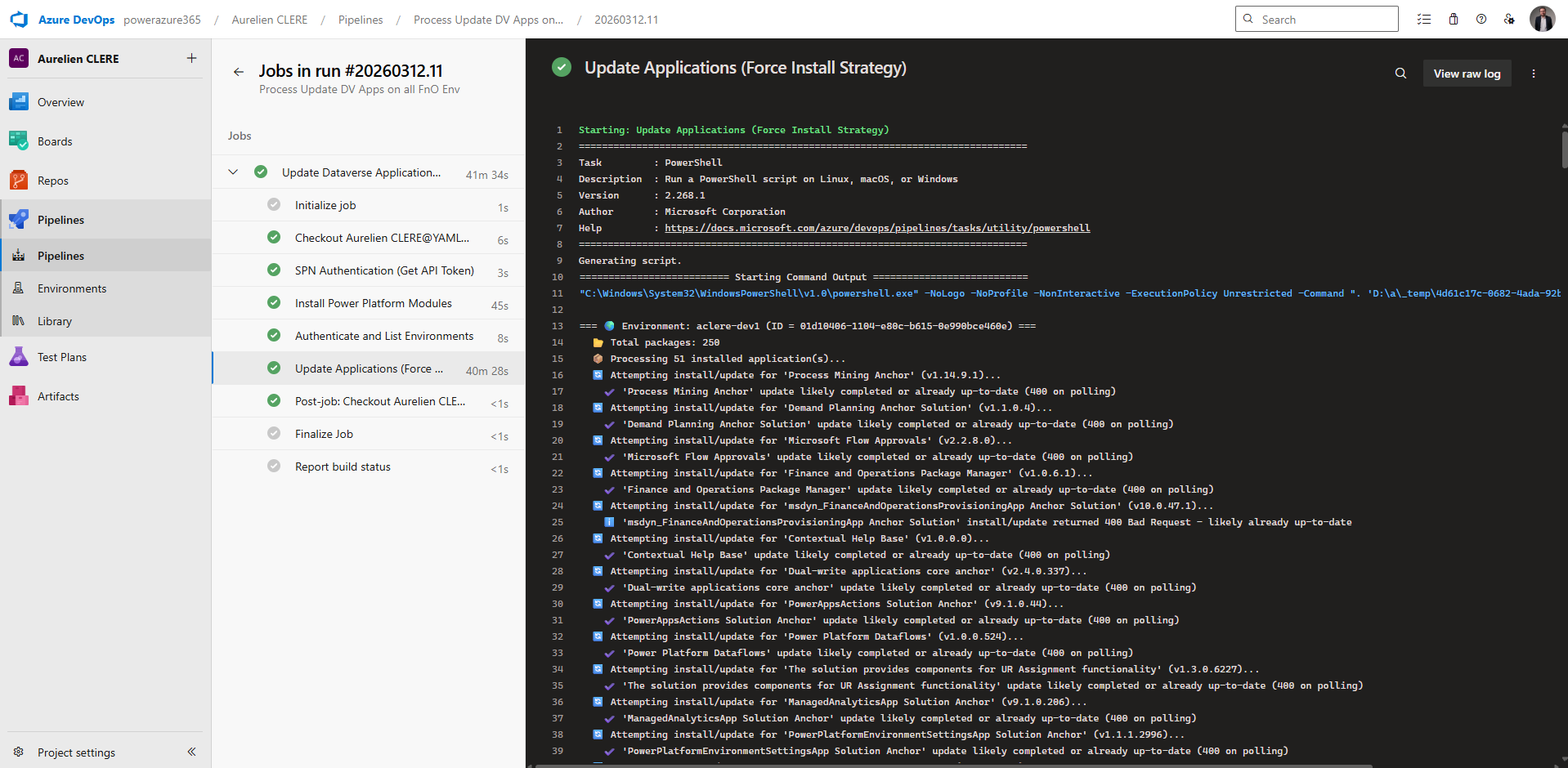

In earlier versions of this article, I stated that automating Dynamics 365 application updates using PAC CLI was not yet possible, particularly in EMEA regions. I've since developed a working solution that fully automates Dynamics 365 app installations and updates across all your Power Platform environments. It uses YAML pipelines, Service Principal authentication, and the Power Platform API. The script will be in the GitHub project linked at the very beginning of the article, where I will upload all the samples I have made for you!
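To illustrate the building blocks, here is a minimal sketch. It lists the applications for an environment and installs one by its unique name with PAC CLI. It assumes a recent PAC CLI version on the agent PATH and the hypothetical variables TargetEnvUrl, AppUniqueName, ClientId, ClientSecret and TenantId. Check `pac application install --help` for the exact options in your version. The complete script is the one in my GitHub project.

```yaml
# List and install Dynamics 365 apps with PAC CLI (minimal sketch).
# Variable names are hypothetical; verify options with 'pac application install --help'.
steps:
- task: PowerShell@2
  displayName: 'Install a Dynamics 365 app'
  env:
    PP_CLIENT_SECRET: $(ClientSecret)
  inputs:
    targetType: 'inline'
    pwsh: true
    script: |
      pac auth create --applicationId "$(ClientId)" --clientSecret "$env:PP_CLIENT_SECRET" `
        --tenant "$(TenantId)" --environment "$(TargetEnvUrl)"
      if ($LASTEXITCODE -ne 0) { throw 'pac auth create failed' }

      # Show the applications known for this environment
      pac application list --environment "$(TargetEnvUrl)"

      # Install the application identified by its unique name
      pac application install --environment "$(TargetEnvUrl)" --application-name "$(AppUniqueName)"
```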